Lecture 8 - Data Splits, Models & Cross-Validation | Stanford CS229: Machine Learning (Autumn 2018)

Bias and Variance in Machine Learning

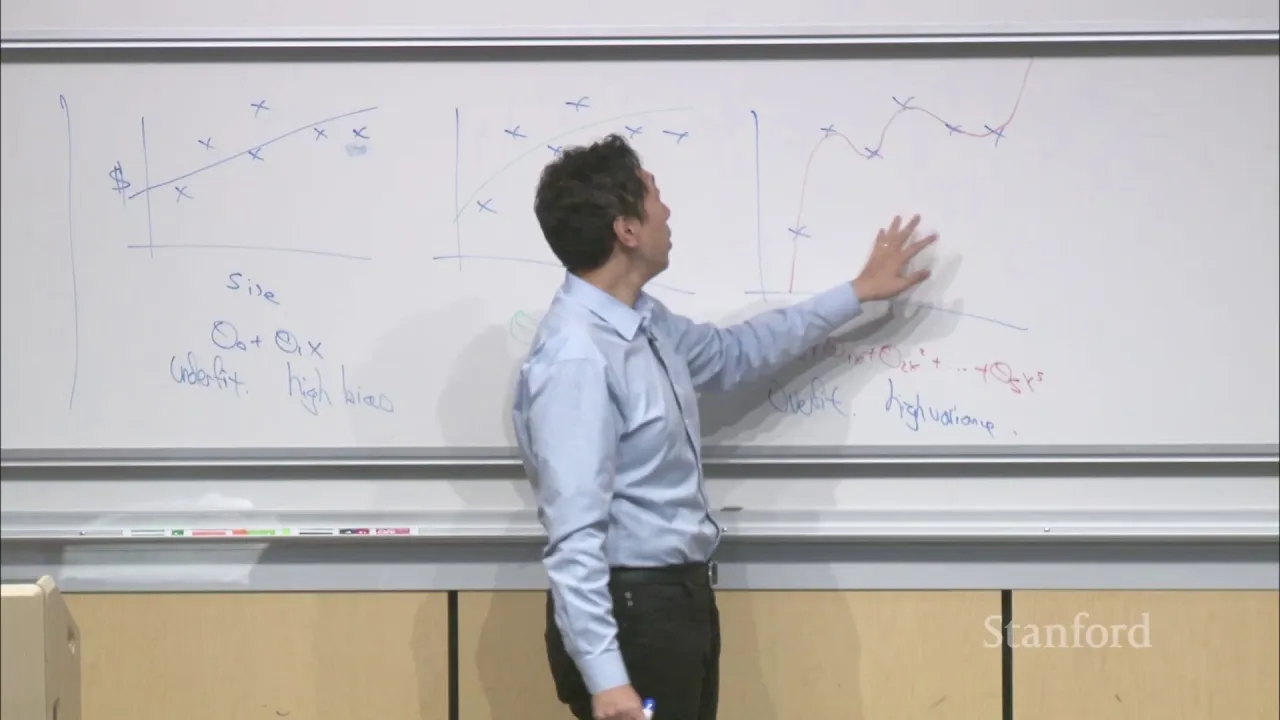

In this section, the speaker discusses the concept of bias and variance in machine learning algorithms. They explain that while bias has multiple meanings in English, in machine learning it refers to strong preconceptions or assumptions made by a learning algorithm. The speaker also introduces the concept of overfitting and high variance.

Understanding Bias and Variance

- The term "bias" has different meanings in English language and machine learning.

- In machine learning, bias refers to strong preconceptions or assumptions made by a learning algorithm.

- This is different from undesirable biases like racial or gender bias that we want to avoid in society.

- Overfitting occurs when an algorithm fits the training data too closely, resulting in high variance.

- High variance means that the fitted model is highly sensitive to the particular training set: small changes in the training data can lead to significantly different predictions.

Regularization as a Technique for Preventing Overfitting

In this section, the speaker introduces regularization as a technique for preventing overfitting. They explain that regularization is widely used in many machine learning models and can be added to optimization objectives.

Regularization for Preventing Overfitting

- Adding more features or using high-dimensional feature spaces can lead to overfitting.

- Regularization is an effective way to prevent overfitting.

- Regularization involves adding an extra term to the optimization objective with a regularization parameter (Lambda).

- Regularization is widely used in many machine learning models.
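
As a concrete instance of the extra term, one common form is an L2 penalty. The sketch below assumes $m$ training examples $(x^{(i)}, y^{(i)})$ and parameters $\theta$; this notation is an assumption, since the summary does not fix it. The first line is the penalty added to least-squares linear regression, the second is the penalty subtracted from the log-likelihood of logistic regression.

```latex
\min_{\theta} \; \frac{1}{2} \sum_{i=1}^{m} \left( y^{(i)} - \theta^{\top} x^{(i)} \right)^{2} + \frac{\lambda}{2} \lVert \theta \rVert^{2}

\arg\max_{\theta} \; \sum_{i=1}^{m} \log p\left( y^{(i)} \mid x^{(i)}; \theta \right) - \frac{\lambda}{2} \lVert \theta \rVert^{2}
```

Setting $\lambda = 0$ recovers the unregularized objective, while a very large $\lambda$ pushes the parameters toward zero and can cause underfitting.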

In this section, the speaker discusses logistic regression and the importance of regularization in fitting parameters.

Logistic Regression and Regularization

- As a rule of thumb, logistic regression without regularization performs well when the number of examples is at least on the order of the number of parameters to be fitted.

- Without regularization, too few examples relative to the number of parameters makes it hard to fit all parameters reliably.

- When using regularization, even with a small number of examples, it is possible to fit a large number of parameters.

- Regularizing each parameter separately would require choosing an individual Lambda for each parameter, which is impractical when there are many parameters.

- Pre-processing steps such as scaling features to a similar range put the parameters on a similar scale, so a single Lambda can reasonably be applied to all of them.

- Scaling features also helps gradient descent run faster and improves algorithm performance.
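
The scaling step can be sketched as follows. This is a minimal illustration, assuming standardization to zero mean and unit variance (one of several possible scaling schemes) and hypothetical feature values; the function name `standardize` is not from the lecture.

```python
import numpy as np

def standardize(X_train, X_other):
    """Scale each feature to zero mean and unit variance.

    The mean and standard deviation are computed on the training set only
    and then reused for any other split (dev or test).
    """
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std = np.where(std == 0, 1.0, std)  # leave constant features unscaled
    return (X_train - mean) / std, (X_other - mean) / std

rng = np.random.default_rng(0)
# Two hypothetical features on very different scales.
X_train = np.column_stack([rng.normal(2000, 500, 200), rng.normal(3, 1, 200)])
X_dev = np.column_stack([rng.normal(2000, 500, 50), rng.normal(3, 1, 50)])

X_train_s, X_dev_s = standardize(X_train, X_dev)
print(X_train_s.mean(axis=0), X_train_s.std(axis=0))
```

Reusing the training statistics for the dev set keeps the two splits on the same scale without letting dev data influence the pre-processing.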

In this section, the speaker addresses questions about support vector machines (SVM) and bias-variance tradeoff.

Support Vector Machines and Bias-Variance Tradeoff

- An SVM is relatively resistant to overfitting because it separates the data with a large margin, and large-margin separators form a low-complexity class of functions.

- The class of functions that separate data with a large margin has low VC dimension, making overfitting less likely.

- Models with high bias tend to underfit while models with high variance tend to overfit.

- High bias refers to models that are too simple and cannot capture complex patterns in the data. High variance refers to models that are overly complex and fit noise in addition to the underlying patterns.

In this section, the speaker discusses the implementation and interpretation of regularization.

Implementation and Interpretation of Regularization

- Regularization is implemented by adding a penalty term on the norm of the parameters.

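- Another way to think about regularization is Bayesian: instead of maximum likelihood estimation, find the parameters that are most probable given the training set, for a model such as a generalized linear model.

- This parallels the way squared-error minimization in linear regression can be derived from maximum likelihood estimation; here the regularized objective is derived from a prior on the parameters.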
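
To make the penalty on the parameter norm concrete, here is a minimal sketch for the linear regression case, assuming a squared L2 penalty and synthetic data. The function name `ridge_fit` and the data are illustrative, and no intercept term is modeled.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize 0.5 * ||X theta - y||^2 + 0.5 * lam * ||theta||^2.

    Setting the gradient to zero gives (X^T X + lam * I) theta = X^T y.
    """
    n_features = X.shape[1]
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 10))
true_theta = rng.normal(size=10)
y = X @ true_theta + 0.5 * rng.normal(size=30)

# Larger lam shrinks the fitted parameters toward zero.
norms = {lam: float(np.linalg.norm(ridge_fit(X, y, lam))) for lam in (0.0, 1.0, 100.0)}
print(norms)
```

The norm of the fitted parameter vector shrinks as the regularization parameter grows, which is the mechanism that limits overfitting.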

In this section, the speaker discusses the expression $\arg\max_{\theta} P(S \mid \theta)\,P(\theta)$ in the context of logistic regression.

Maximizing Probability with Logistic Regression

- By Bayes' rule, $P(\theta \mid S) = P(S \mid \theta)\,P(\theta) / P(S)$. The denominator does not depend on $\theta$, so maximizing the posterior reduces to $\arg\max_{\theta} P(S \mid \theta)\,P(\theta)$.

- The first term, $P(S \mid \theta)$, is the likelihood that logistic regression (or any generalized linear model) already uses.

- The second term, $P(\theta)$, is the prior on the parameters.

This section explores the assumption that $P(\theta)$ follows a Gaussian distribution and its implications for regularization techniques.

Gaussian Prior for $P(\theta)$

- Assuming $\theta \sim \mathcal{N}(0, \tau^2 I)$, with $n$ the dimension of $\theta$, the prior density is $P(\theta) = \frac{1}{(2\pi)^{n/2}\,|\tau^2 I|^{1/2}} \exp\left(-\frac{1}{2}\,\theta^{\top} (\tau^2 I)^{-1}\,\theta\right)$.

- By plugging this prior into the expression and performing calculations such as taking logs and computing maximum a posteriori estimation, we obtain the regularization technique discussed earlier.
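
The calculation can be sketched as follows, assuming a training set $S = \{(x^{(i)}, y^{(i)})\}_{i=1}^{m}$ of independent examples and a zero-mean Gaussian prior with covariance $\tau^2 I$. The notation is an assumption, and terms that do not depend on $\theta$ are collected into a constant.

```latex
\theta_{\text{MAP}}
  = \arg\max_{\theta} \; P(S \mid \theta)\, P(\theta)
  = \arg\max_{\theta} \; \sum_{i=1}^{m} \log p\left( y^{(i)} \mid x^{(i)}, \theta \right) + \log P(\theta)

\log P(\theta) = -\frac{1}{2\tau^{2}} \lVert \theta \rVert^{2} + \text{const}

\theta_{\text{MAP}}
  = \arg\max_{\theta} \; \sum_{i=1}^{m} \log p\left( y^{(i)} \mid x^{(i)}, \theta \right) - \frac{1}{2\tau^{2}} \lVert \theta \rVert^{2}
```

If the penalty is written as $\frac{\lambda}{2} \lVert \theta \rVert^2$, this corresponds to $\lambda = 1/\tau^2$: a narrower prior (smaller $\tau$) means stronger regularization.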

This section compares frequentist and Bayesian approaches to statistics and their views on estimating unknown parameters.

Frequentist vs Bayesian Statistics

- Frequentist statisticians aim to find the value of Theta that makes the data as likely as possible through maximum likelihood estimation. They view Theta as an unknown true value that generated the data.

- Bayesian statisticians treat Theta as a random variable and encode their beliefs about it, before observing any data, in a prior distribution $P(\theta)$, such as the Gaussian prior discussed earlier.

- The goal in Bayesian statistics is to find the value of Theta that is most likely after observing the data, known as maximum a posteriori estimation (MAP).

This section discusses the difference between regularized and non-regularized approaches in both frequentist and Bayesian statistics.

Regularization in Statistics

- In both frequentist and Bayesian statistics, regularization can be used. In frequentist statistics, regularization is added as part of the algorithm without deriving it from a Bayesian prior.

- The choice between regularized and non-regularized approaches depends on the specific problem and preferences. As a machine learning engineer, practicality and effectiveness are often prioritized over philosophical debates.

This section explores the relationship between model complexity and training error when regularization is not applied.

Model Complexity and Training Error

- Increasing model complexity, such as using higher degree polynomials, tends to improve training error without regularization. A higher degree polynomial can fit data better than a lower degree one.
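
The effect on training error can be checked with a short experiment. This is a minimal sketch on synthetic data (a noisy sine curve chosen purely for illustration), fitting unregularized polynomials of increasing degree with `np.polyfit`.

```python
import numpy as np

rng = np.random.default_rng(0)
x = np.linspace(-1, 1, 12)
y = np.sin(3 * x) + 0.2 * rng.normal(size=x.size)

def training_mse(degree):
    """Fit a polynomial of the given degree and return its error on the same data."""
    coeffs = np.polyfit(x, y, degree)
    return float(np.mean((np.polyval(coeffs, x) - y) ** 2))

train_errors = {d: training_mse(d) for d in (1, 2, 5, 8)}
for degree, err in train_errors.items():
    print(f"degree {degree}: training MSE = {err:.4f}")
```

Because each lower-degree polynomial is a special case of the higher-degree one, the training error cannot increase with degree. This says nothing about dev or test error, which is why training error alone cannot be used to choose the degree.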

In this section, the speaker discusses the concept of underfitting and the need for large test sets to measure small differences in algorithm performance.

Underfitting and Small Differences in Algorithm Performance

- The speaker explains that fitting a linear function can result in underfitting.

- To measure small differences in algorithm performance, a large amount of data is required.

- If algorithms have a small difference in performance (e.g., 0.01%), a significant amount of data is needed to distinguish between them.

- Choosing dev and test sets that are big enough allows for meaningful comparisons between different algorithms.

- With a smaller number of examples (e.g., 100), it becomes difficult to measure very small differences in algorithm performance.

- The recommendation is to choose dev and test sets that provide enough data to observe expected differences in algorithm performance.
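
A rough way to see why small differences need large dev and test sets is the binomial standard error of a measured accuracy. This formula is not taken from the lecture summary; it assumes independent, identically distributed examples, and the accuracy value 0.90 is purely illustrative.

```python
import math

def accuracy_standard_error(accuracy, n_examples):
    """Binomial standard error of an accuracy measured on n_examples i.i.d. examples."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_examples)

for n in (100, 1_000, 1_000_000):
    se = accuracy_standard_error(0.90, n)
    print(f"n={n:>9,d}  standard error ~ {100 * se:.3f} percentage points")
```

A difference between two algorithms is only reliably detectable when it is clearly larger than this standard error, so the noise in the measurement has to shrink as the difference of interest shrinks.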

In this section, the speaker explains that the required size of the dev and test sets depends on how small a performance difference needs to be detected.

Importance of Large Test Sets for Comparing Algorithms

- When comparing algorithms with larger performance differences (e.g., 2% or 1%), a smaller number of examples (e.g., 1,000) may be sufficient to distinguish between them.

- It is crucial to choose dev and test sets that are big enough to observe meaningful differences in algorithm performance.

- Having more data helps distinguish very small differences (e.g., 0.01%) between algorithms.

- For very large datasets, the percentage of data allocated for dev and test tends to be much smaller compared to training data.

In this section, the speaker introduces hold-out cross-validation as a procedure for choosing train, dev, and test sets.

Hold-Out Cross-Validation

- The procedure of splitting the dataset into train and dev sets is called hold-out cross-validation.

- This procedure is used to choose the degree of polynomial, the regularization parameter Lambda, or other model-selection parameters.

- The dev set is sometimes referred to as the cross-validation set.

- It is common to split the dataset into a training set and a dev set when developing a learning algorithm.
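
The hold-out procedure can be sketched as follows. This is a minimal illustration on synthetic data, assuming a 70/30 train/dev split and polynomial degree as the quantity being chosen; the split ratio, data, and variable names are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 200
x = rng.uniform(-1, 1, size=m)
y = np.sin(3 * x) + 0.2 * rng.normal(size=m)

# Randomly shuffle the indices, then hold out about 30% as the dev set.
perm = rng.permutation(m)
n_train = round(0.7 * m)
train_idx, dev_idx = perm[:n_train], perm[n_train:]

def mse(coeffs, idx):
    """Mean squared error of a fitted polynomial on the examples in idx."""
    return float(np.mean((np.polyval(coeffs, x[idx]) - y[idx]) ** 2))

dev_errors = {}
for degree in range(1, 9):
    coeffs = np.polyfit(x[train_idx], y[train_idx], degree)  # fit on train only
    dev_errors[degree] = mse(coeffs, dev_idx)                 # evaluate on dev only

best_degree = min(dev_errors, key=dev_errors.get)
print(best_degree, dev_errors[best_degree])
```

The model is never fit on the dev examples, so the dev error is a fair basis for comparing degrees. A separate test set, untouched during this selection, would still be needed for an unbiased final estimate.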

In this section, the speaker discusses the importance of not making decisions based on test set performance and how repeated evaluation on the test set can be acceptable for tracking performance over time.

Test Set Usage and Evaluation

- It is crucial not to make decisions about a model based on test set performance to maintain an unbiased estimate.

- Repeatedly evaluating an algorithm on the test set without making decisions based on it is acceptable.

- Tracking team performance over time using evaluations on the test set can be done by reporting results without influencing decision-making.

- Evaluating algorithm performance on a separate test set provides insights into its overall effectiveness.

In this section, the speaker explains how to define train, dev, and test sets for very small datasets.

Defining Train, Dev, and Test Sets for Small Datasets

- For small datasets (e.g., 100 examples), it becomes challenging to allocate data for train, dev, and test sets effectively.

- The focus in this section is primarily on splitting data into train and dev sets. The separate test set is not considered at this point.

Working with Healthcare Data Sets

The speaker discusses working with data sets in the healthcare industry.

Working with Small Data Sets

- For very small data sets, leave-one-out cross-validation is an option: the model (e.g., a linear regression) is refit m times, each time holding out a single example.

- Because of the cost of refitting, this approach is suitable mainly when the number of examples (m) is about 100 or fewer.

- If m is larger, it is recommended to use k-fold cross-validation instead.

Correlation in Cross-Validation Estimates

- In k-fold cross-validation, the estimates obtained are correlated because most of the training data overlaps.

- Research has shown that, despite this correlation, the averaged estimate is not a worse estimate of the error on unseen data.

- However, measuring variance based on these estimates may not be reliable due to their high correlation.

Applicability in Deep Learning

- Using k-fold cross-validation in deep learning depends on the size of the training set and complexity of the neural network.

- If there is a small training set or a relatively simple neural network, k-fold cross-validation can be considered.

- However, for deep learning algorithms that require extensive training time or larger datasets, other techniques like transfer learning or feature heterogeneity should be explored.

Evaluating Performance using Cross-Validation

The speaker explains how to evaluate performance using k-fold cross-validation and addresses questions related to this topic.

Estimating Error through Averaging

- In 10-fold cross-validation, each iteration provides an estimate of error for a specific classifier.

- These individual errors are averaged to obtain an overall estimate for the performance of a classifier with a certain degree polynomial.
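
The averaging step can be sketched as follows. This is a minimal illustration assuming polynomial regression on synthetic data, with mean squared error as the error measure; the function name `k_fold_error` and the data are hypothetical.

```python
import numpy as np

def k_fold_error(x, y, degree, k=10, seed=0):
    """Average held-out MSE of a polynomial of the given degree over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(x)), k)
    fold_errors = []
    for i in range(k):
        held_out = folds[i]
        # Train on the other k - 1 folds.
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        coeffs = np.polyfit(x[train], y[train], degree)
        residuals = np.polyval(coeffs, x[held_out]) - y[held_out]
        fold_errors.append(float(np.mean(residuals ** 2)))
    return float(np.mean(fold_errors)), fold_errors

rng = np.random.default_rng(1)
x = rng.uniform(-1, 1, size=50)
y = np.sin(3 * x) + 0.2 * rng.normal(size=50)

cv_errors = {d: k_fold_error(x, y, d)[0] for d in range(1, 6)}
best_degree = min(cv_errors, key=cv_errors.get)
print(best_degree, cv_errors)
```

Every example is held out exactly once, so all of the data contributes to both fitting and evaluation, at the cost of fitting the model k times per candidate degree.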

Variance Measurement

- The 10 estimates obtained from k-fold cross-validation are correlated due to overlapping training data.

- While it is possible to measure variance based on these estimates, they are not considered trustworthy due to their high correlation.

Applicability in Deep Learning

- Using k-fold cross-validation in deep learning is less common due to the time-consuming nature of training neural networks.

- If the training set is small (e.g., 20 examples), other techniques should be considered to improve performance, such as transfer learning or feature heterogeneity.

Averaging Test Errors and Feature Selection

The speaker discusses averaging test errors and briefly touches on feature selection.

Averaging Test Errors

- In k-fold cross-validation, each iteration provides an error value measured on the left-out part of the data, which acts as the held-out set for that iteration.

- These held-out errors are averaged to estimate the overall error of a classifier with a specific degree polynomial.

Feature Selection

- In scenarios with numerous features, such as text classification with thousands of words, it may be necessary to identify important features.

- Random shuffling is a good default method for sampling data when training and testing on different sets.

- For more information on train-test splits involving different distributions, refer to the book "Machine Learning Yearning" by Andrew Ng.

Importance of Feature Selection

The speaker emphasizes the importance of feature selection in machine learning tasks.

Identifying Important Features

- When dealing with datasets containing many features, it is crucial to determine which features are relevant.

- For example, in text classification with thousands of words, certain words may not contribute significantly to the classification task.

- Proper feature selection can help improve model performance and reduce computational complexity.

Finding a Subset of Useful Features

In this section, the speaker discusses the importance of finding a small subset of features that are most useful for a given task.

Identifying Useful Features

- It is crucial to identify a small subset of features that are most relevant and useful for the task at hand.

- By focusing on a smaller set of features, it becomes easier to analyze and extract meaningful insights from the data.

- This process helps in reducing complexity and improving the efficiency of algorithms or models used for the task.

Benefits of Feature Selection

- Feature selection allows us to eliminate irrelevant or redundant features, which can lead to better performance and interpretability.

- It helps in reducing overfitting by preventing models from learning noise or irrelevant patterns present in the data.

- With fewer features, computational resources required for training and inference can be significantly reduced.

Techniques for Feature Selection

- Various techniques can be employed for feature selection, such as statistical tests, correlation analysis, information gain, or regularization methods.

- The choice of technique depends on the nature of the data and the specific requirements of the task.
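
As one illustration of the correlation analysis mentioned above, features can be ranked by the absolute value of their Pearson correlation with the target. This is a minimal sketch on synthetic data, not the method presented in the lecture; the function name and the setup (only features 0 and 3 matter) are hypothetical.

```python
import numpy as np

def rank_features_by_correlation(X, y):
    """Return feature indices sorted by |Pearson correlation| with y, strongest first."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    denom = np.where(denom == 0, 1.0, denom)  # constant features get correlation 0
    corr = (Xc * yc[:, None]).sum(axis=0) / denom
    return np.argsort(-np.abs(corr)), corr

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 6))
# Hypothetical setup: only features 0 and 3 influence the target.
y = 2.0 * X[:, 0] - 1.0 * X[:, 3] + 0.1 * rng.normal(size=500)

order, corr = rank_features_by_correlation(X, y)
top_two = set(order[:2].tolist())
print(order, np.round(corr, 3))
```

A per-feature score like this is cheap but ignores interactions between features, so the selected subset should still be validated on a dev set.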

Considerations for Feature Selection

- When selecting features, it is important to consider domain knowledge and expertise to ensure that relevant information is not overlooked.

- Regular evaluation and validation should be performed to assess the impact of feature selection on model performance.
