But how do AI images and videos actually work? | Guest video by Welch Labs

Understanding Diffusion Models in AI

The Connection Between AI and Physics

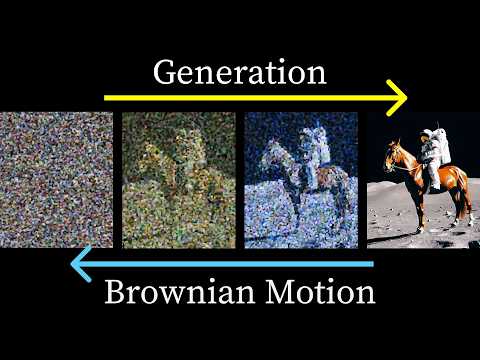

- Over recent years, AI systems have excelled at converting text prompts into videos, leveraging a deep connection to physics.

- This generation of models utilizes a process called diffusion, akin to Brownian motion but with time reversed and operating in high-dimensional space.

Practical Application of Diffusion Models

- An example video generated by the open-source model WAN 2.1 illustrates how prompts can be modified to influence video content.

- The video generation starts with random pixel intensity values, which are then refined through multiple iterations using a transformer model similar to those used in language processing.

Iterative Refinement Process

- The transformation from noise to structured video occurs over several iterations, showcasing how the model gradually shapes the output into realistic visuals.

Exploring CLIP: A Key Model for Understanding

- The discussion will explore OpenAI's 2021 paper on CLIP, which consists of two models (language and vision), trained to create a shared representation between words and images.

- While learning this shared representation is crucial, it alone does not suffice for image generation; understanding the diffusion process is also essential.

Training Methodology of CLIP

- In 2020, advancements in neural scaling laws led researchers to apply similar methodologies from language modeling to image processing with CLIP.

- CLIP was trained on 400 million image-caption pairs, aiming for similarity between vectors representing matching images and captions.

Contrastive Learning Approach

- The training involves maximizing similarity between corresponding image-caption pairs while minimizing it for non-matching pairs using a contrastive approach.

- Cosine similarity is employed as a metric for measuring vector alignment in high-dimensional space, ensuring that related images and captions are closely aligned while unrelated ones are distanced.

Understanding CLIP and Diffusion Models

The Geometry of Latent Space in CLIP

- The shared vector space, known as latent or embedding space, has unique properties that allow mathematical operations on image concepts.

- By subtracting vectors representing different states (e.g., wearing a hat vs. not wearing a hat), we can derive new vectors that correspond to specific text concepts.

- Testing with common words shows that the top match for the difference vector is "hat," demonstrating how CLIP translates visual differences into vector distances.

- CLIP's method allows impressive image classification by comparing image vectors to potential captions based on cosine similarity.

- However, CLIP only maps images and text to the embedding space; it does not generate images or text from these vectors.

Introduction of Diffusion Models

- In 2020, significant advancements in language modeling occurred alongside the introduction of Denoising Diffusion Probabilistic Models (DDPM).

- DDPM demonstrated high-quality image generation through a diffusion process where noise is incrementally added to training images until they are unrecognizable.

- Contrary to initial assumptions, modern diffusion models do not simply reverse noise addition step-by-step but utilize more complex algorithms for effective training and generation.

Key Insights from Berkeley's Approach

- The Berkeley team's approach involves adding random noise during both training and image generation, which significantly enhances output quality.

- For example, using stable diffusion 2 with DDPM sampling produces high-quality images compared to removing noise addition during generation, which results in poor outputs.

Understanding Noise Addition in Image Generation

- Adding random noise while generating images leads to sharper results; this counterintuitive method improves overall quality despite seeming detrimental at first glance.

- Instead of predicting one noisy step back towards clarity, models predict total noise added to an original clean image—this skips intermediate steps and simplifies learning tasks.

Theoretical Foundations of Diffusion Models

- The DDPM paper incorporates complex theories explaining why their algorithms work effectively; further exploration can be found in linked tutorials for deeper understanding.

- Viewing diffusion models as learning a time-varying vector field provides intuitive insights into their functionality and connects them with flow-based models gaining popularity.

Understanding Diffusion Models in Image Processing

Visualizing Pixel Intensity in 2D Space

- The intensity value of each pixel determines its position in a high-dimensional space. By reducing images to two pixels, we can plot their intensity values on a scatterplot: the first pixel on the x-axis and the second on the y-axis. An image with a black first pixel and a white second pixel would be represented at (0, 1) on this plot.

- Real images exhibit specific structures within this high-dimensional space. To facilitate learning for our diffusion model, we can create structured points in a lower-dimensional space, starting with a spiral shape as an example.

The Core Concept of Diffusion Models

- Diffusion models involve adding noise to an image and training a neural network to reverse this process. In our toy dataset, adding random noise corresponds to taking steps in random directions within the scatterplot representation of images. This mimics Brownian motion seen in physical diffusion processes.

- Our model is tasked with reversing these random walks by denoising images, effectively recovering original data structures from noisy representations through learned patterns over many iterations.

Training Objectives and Learning Mechanisms

- In naive diffusion modeling, predicting coordinates involves using the final point of a random walk to estimate previous steps; however, there is inherent randomness that can obscure learning signals. Instead, training should focus on predicting total noise added across all steps rather than step-by-step predictions for better efficiency and reduced variance in training examples.

- The Berkeley team's approach emphasizes predicting vectors pointing back towards original data distributions (score functions), which guide models toward less noisy data while maintaining learning objectives intact despite varying levels of added noise during training phases.

Conditioning Models on Time for Enhanced Learning

- A clever solution involves conditioning models based on time variables corresponding to steps taken during random walks; this allows for more nuanced vector fields that adapt as t approaches zero or one—enabling both coarse and refined learning depending on how much noise has been introduced into the dataset over time.

- Observing time evolution reveals interesting behavior changes as t approaches certain thresholds; notably, vector fields transition from general directions towards specific structures like spirals—indicating potential phase changes within learned representations of data distributions.

Generating Images Using DDPM Algorithm

- The DDPM algorithm generates images by starting at random locations and navigating back toward structured forms like spirals through guided steps influenced by learned vector fields while incorporating scaled random noise along the way—illustrating how controlled randomness contributes to image quality enhancement during generation processes.

Understanding the Role of Noise in Diffusion Models

The Impact of Random Steps on Particle Movement

- The scale of random steps may appear large initially, but as the DDPM algorithm progresses, these steps decrease in size. After 64 iterations, particles exhibit significant movement due to both learned vector fields and random noise, ultimately aligning with a spiral pattern.

- In the reverse diffusion process without noise addition, points converge rapidly towards the center of the spiral rather than spreading out along its edges.

Consequences of Removing Noise Addition

- Removing random noise leads to generated points clustering around the average center of the spiral instead of capturing its full distribution. This results in blurry images since averages tend to lack detail.

- The analogy between a toy dataset and high-dimensional image datasets breaks down; while points still land on a 2D spiral, they fail to represent diverse realistic images.

Mathematical Insights into Mean Learning Behavior

- The model learns to predict the mean or average based on input points and time during diffusion. This is mathematically supported by showing that added Gaussian noise leads to a Gaussian distribution in reverse processes.

- To sample from this learned distribution effectively, zero mean Gaussian noise must be added after each prediction step in DDPM's image generation process.

Early Reverse Diffusion Process Dynamics

- Early in reverse diffusion (when t is close to 1), the model's vector field directs points toward the dataset's average. Adding random noise helps prevent all generated points from clustering at this mean.

Evolution and Adoption of Diffusion Models

- Although DDPM established diffusion models for image generation, it faced challenges due to high computational demands from numerous required steps through large neural networks.

- Subsequent research demonstrated that high-quality images could be generated without adding random noise during generation, significantly reducing necessary steps.

Transitioning from Stochastic to Ordinary Differential Equations

- The DDPM process can be expressed using stochastic differential equations (SDE), where one term represents motion driven by vector fields and another accounts for randomness.

- Utilizing results from statistical mechanics (Fokker-Planck equation), researchers found an ordinary differential equation (ODE) that yields identical final distributions as SDE without randomness involved.

Advantages of DDIM Over Traditional Methods

- The new algorithm derived from ODE resembles DDPM but eliminates random noise addition at each step while adjusting step sizes for better trajectory alignment with vector fields.

- By employing smaller scaling for step sizes within DDIM, trajectories can more accurately follow vector field contours leading to improved image quality compared to previous methods where points clustered near data means.

Diffusion Models and Their Evolution

Transition from DDPM to DDIM

- The DDIM algorithm improves upon the original DDPM by eliminating the need for random steps during model training, allowing for high-quality image generation in fewer steps and with deterministic outcomes.

Understanding Image Distribution

- Both stochastic (DDPM) and deterministic (DDIM) algorithms yield the same final distribution of images, even though individual outputs may differ.

Limitations of Early Diffusion Models

- Despite advancements in generating high-quality images with diffusion models like DDIM, steering the diffusion process using text prompts remained a challenge due to limited capabilities.

Integrating CLIP with Diffusion Models

- The potential exists to reverse the CLIP image encoder using diffusion models, where embedding vectors from CLIP's text encoder can guide image generation towards desired outputs based on textual prompts.

OpenAI's unCLIP Methodology

- OpenAI developed a method called unCLIP (commercially known as DALI2), which effectively uses image-caption pairs to train a diffusion model that adheres closely to input prompts, achieving remarkable detail in generated images.

Conditioning Techniques in Diffusion Models

- To utilize embedding vectors from models like CLIP for guiding diffusion processes, one approach involves conditioning the model on text inputs alongside traditional noise removal training methods.

Enhancing Prompt Adherence

- Conditioning alone does not guarantee prompt adherence; additional strategies are necessary to ensure that generated images align closely with user requests.

Class Information and Model Training

- A more effective strategy involves incorporating class information into training datasets. By labeling data points (e.g., person, cat, dog), models can better navigate towards specific sections of an overall data structure during generation.

Challenges in Class Conditioned Generation

- When attempting class-conditioned generation without sufficient separation between general structure learning and specific class targeting, confusion can arise—illustrated by misclassifications between people and dogs in generated images.

Decoupling Generalization from Specificity

- A solution lies in leveraging both unconditional models (not trained on specific classes) and conditioned ones. This allows for flexibility in generating general vector fields or targeted outputs based on class information provided selectively during training.

Classifier-Free Guidance in Diffusion Models

Understanding Vector Fields and Classifier-Free Guidance

- The data is initially aligned with the average of a spiral, but as time approaches zero, vector fields diverge, indicating different directions for conditioned and unconditioned models.

- By subtracting the gray unconditioned vector from the yellow class-conditioned vector, a new direction emerges that better aligns with cat examples by removing general data directionality.

- This new direction can be amplified using a scaling factor (alpha), allowing for adjustments to guide points more effectively towards desired outcomes.

- Utilizing green vectors instead of original yellow vectors enhances the diffusion process, leading to improved alignment of points on the spiral through classifier-free guidance.

- The same approach applies to dog examples; guided vectors lead to successful convergence on their respective spiral sections, demonstrating effective model training across classes.

Application and Impact of Classifier-Free Guidance

- A third vector field for people also shows good convergence results. Classifier-free guidance has become crucial in modern image and video generation models.

- Adding classifier-free guidance significantly improves output quality; at a guidance scale alpha of around 2, details like trees begin appearing in generated images.

- WAN's video generation model innovates further by employing negative prompts to specify unwanted features, steering outputs away from these elements during diffusion.

- An example illustrates how without negative prompts, generated scenes may appear disjointed or cartoonish due to lack of specific feature control.

Reflections on Model Development

- Since the DDPM paper's release in 2020, rapid advancements have led to sophisticated text-to-video models that integrate complex ideas seamlessly into high-dimensional spaces.

- The ability to use trained text encoders from various sources to influence diffusion processes highlights an impressive synergy between simple geometric intuitions and complex model operations.

Guest Contributions and Future Directions

- This segment features guest creator Stephen Welsh from WelshLabs, known for high-quality educational content including machine learning topics and imaginary numbers series turned into books.

- The host discusses taking paternity leave while commissioning guest videos covering diverse subjects such as statistical mechanics and modern art combined with group theory.