COA | Chapter 04 Cache Memory Part 05 | Direct Mapping (in Arabic)

Introduction to Cache Memory and Mapping Functions

Overview of Cache Memory

- The speaker introduces the topic of cache memory, emphasizing its importance in computing systems.

- Discussion on mapping functions within cache memory, highlighting their role in data retrieval and storage efficiency.

Understanding Mapping Functions

- Explanation of what mapping functions are, particularly in relation to cache lines and blocks.

- The necessity for defining specific mappings between main memory blocks and cache lines is discussed.

Cache Line Structure and Functionality

Cache Line Characteristics

- Under direct mapping, each block in main memory is mapped to exactly one specific cache line, which keeps lookups simple and predictable.

- Because main memory contains far more blocks than the cache has lines, many blocks are associated with the same cache line, so the cache must track which one is currently stored there.
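
The block-to-line relationship described above can be sketched with the standard direct-mapping rule, line = block number mod number of lines. This is a minimal illustration, not the speaker's own example: the function name `cache_line_for_block` and the toy sizes (4 lines, 12 blocks) are assumptions chosen for readability.

```python
def cache_line_for_block(block_number: int, num_lines: int) -> int:
    """Direct mapping rule: block j is placed in line i = j mod m."""
    if num_lines <= 0:
        raise ValueError("num_lines must be positive")
    return block_number % num_lines


NUM_LINES = 4  # assumed toy cache with 4 lines

# Group the first 12 main-memory blocks by the line they map to.
mapping = {}
for block in range(12):
    line = cache_line_for_block(block, NUM_LINES)
    mapping.setdefault(line, []).append(block)

for line, blocks in sorted(mapping.items()):
    print(f"line {line}: blocks {blocks}")
```

Each line ends up with several candidate blocks (for example, blocks 0, 4, and 8 all share line 0), which is why the cache needs a way to record which of them is currently present.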

Block Management

- The speaker elaborates on how different blocks interact within the same cache line, including potential conflicts that may arise.

- A detailed example illustrates how blocks that map to different cache lines can reside in the cache at the same time, whereas blocks that share a line displace one another.

Practical Implications of Cache Management

Performance Considerations

- Discussion on how effective caching strategies can significantly enhance system performance by reducing access times.

- Emphasis on understanding the relationship between block size and cache line capacity: each line holds exactly one block, so the two sizes must match.

Control Mechanisms

- The importance of control bits, such as the tag, in identifying which block currently occupies a given cache line is highlighted.
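
To make the role of these control bits concrete, here is a small simulation sketch of a direct-mapped cache that stores a tag per line. The class name `DirectMappedCache`, the valid bit, and the access trace are illustrative assumptions, not details taken from the lecture.

```python
class DirectMappedCache:
    """Toy direct-mapped cache that tracks only control bits, not data."""

    def __init__(self, num_lines: int):
        self.num_lines = num_lines
        self.valid = [False] * num_lines  # assumed valid bit per line
        self.tags = [0] * num_lines       # tag of the block held in each line

    def access(self, block_number: int) -> bool:
        """Return True on a hit; on a miss, load the block and return False."""
        line = block_number % self.num_lines
        tag = block_number // self.num_lines
        if self.valid[line] and self.tags[line] == tag:
            return True
        # Miss: the new block replaces whatever occupied this line.
        self.valid[line] = True
        self.tags[line] = tag
        return False


cache = DirectMappedCache(num_lines=4)
trace = [0, 1, 0, 4, 0, 1]  # blocks 0 and 4 both map to line 0
results = [cache.access(block) for block in trace]
print(results)
```

In this trace, block 4 evicts block 0 from line 0, so the following access to block 0 misses even though the rest of the cache is still empty. That is the conflict behaviour mentioned under Block Management.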

Understanding Cache and Memory Management

Cache Structure and Functionality

- The discussion begins with the concept of cache lines, emphasizing their role in memory management. It highlights how data is organized within these lines for efficient access.

- The speaker explains the importance of block sizes in cache architecture, noting that different blocks can impact performance based on their arrangement and size.

- A focus on the relationship between cache size and memory efficiency is presented, illustrating how larger caches can lead to better performance but also require careful management.

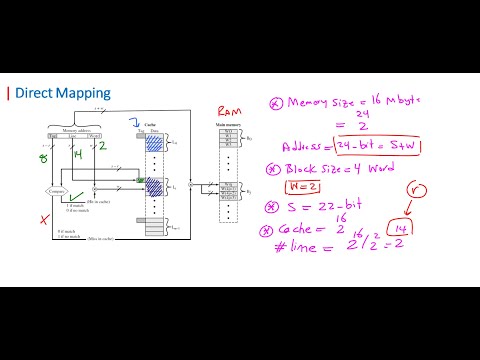

- The speaker introduces a practical example involving cache size calculations, demonstrating how to determine the number of bytes that can be stored based on block sizes.

- An explanation follows regarding memory allocation strategies, particularly how they relate to cache organization and overall system performance.
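
The kind of cache size calculation mentioned above can be sketched as follows. The concrete figures (a 64 KB cache, 4-byte blocks, 16 MB of main memory) are assumed here purely for illustration; the summary does not state which numbers the speaker used.

```python
# Assumed example sizes; the lecture's actual numbers are not given here.
CACHE_SIZE_BYTES = 64 * 1024               # 64 KB cache
BLOCK_SIZE_BYTES = 4                       # 4 bytes per block (and per line)
MAIN_MEMORY_BYTES = 16 * 1024 * 1024       # 16 MB main memory

# Number of cache lines: each line holds exactly one block.
num_lines = CACHE_SIZE_BYTES // BLOCK_SIZE_BYTES

# Number of blocks in main memory.
num_blocks = MAIN_MEMORY_BYTES // BLOCK_SIZE_BYTES

# With direct mapping, this many memory blocks compete for each line.
blocks_per_line = num_blocks // num_lines

print(f"cache lines:      {num_lines}")
print(f"memory blocks:    {num_blocks}")
print(f"blocks per line:  {blocks_per_line}")
```

Under these assumed sizes the cache has 16,384 lines, and 256 different memory blocks map to each one.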

Memory Size Considerations

- The conversation shifts to memory size implications, detailing how different configurations affect processing speed and data retrieval times.

- There’s a discussion about the balance between main memory size and cache effectiveness, stressing that larger main memories do not always equate to faster processing speeds.

- The speaker emphasizes the significance of understanding both main memory and cache interactions when designing systems for optimal performance.

- A breakdown of address space is provided, explaining how addresses are structured within a system's architecture for efficient data access.

- The importance of addressing schemes in relation to block selection from available options is discussed, highlighting its relevance in programming contexts.
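
One way to picture the address breakdown is to split a memory address into tag, line, and word fields, as direct mapping does. The sketch below assumes all sizes are powers of two and reuses illustrative figures (16 MB memory, 64 KB cache, 4-byte blocks); the helper names `field_widths` and `split_address` and the sample address are hypothetical.

```python
def field_widths(memory_bytes: int, cache_bytes: int, block_bytes: int):
    """Return (tag_bits, line_bits, word_bits); sizes must be powers of two."""
    address_bits = (memory_bytes - 1).bit_length()
    word_bits = (block_bytes - 1).bit_length()
    line_bits = (cache_bytes // block_bytes - 1).bit_length()
    tag_bits = address_bits - line_bits - word_bits
    return tag_bits, line_bits, word_bits


def split_address(address: int, line_bits: int, word_bits: int):
    """Split an address into its (tag, line, word) fields."""
    word = address & ((1 << word_bits) - 1)
    line = (address >> word_bits) & ((1 << line_bits) - 1)
    tag = address >> (word_bits + line_bits)
    return tag, line, word


# Assumed configuration: 16 MB memory, 64 KB cache, 4-byte blocks.
tag_bits, line_bits, word_bits = field_widths(16 * 1024 * 1024, 64 * 1024, 4)
print(f"tag={tag_bits} bits, line={line_bits} bits, word={word_bits} bits")

tag, line, word = split_address(0x16339C, line_bits, word_bits)
print(f"tag=0x{tag:02X}, line=0x{line:04X}, word={word}")
```

The line field selects the single cache line the block may occupy, the tag distinguishes the blocks that share that line, and the word field picks the byte within the block.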

Addressing Techniques

- An overview of addressing techniques reveals their critical role in managing data flow between CPU and memory effectively.

- The speaker discusses various types of addresses used in computing systems, including physical versus logical addresses, which influence how data is accessed during operations.

Understanding Cache and Memory Blocks

Overview of Cache and Memory Blocks

- The discussion begins with the concept of cache memory, specifically focusing on how blocks are structured within it. It raises questions about whether multiple blocks can exist simultaneously.

- The speaker explains that only one block can occupy a given cache line at a time, emphasizing that blocks sharing a line cannot be stored there simultaneously.

- A comparison is made between different types of blocks, highlighting their roles in data retrieval and processing efficiency.

Memory Addressing and Block Size

- The importance of memory addressing is discussed, particularly how addresses correspond to specific blocks in memory. This includes an explanation of how block sizes affect overall performance.

- The speaker elaborates on the relationship between block size and data retrieval speed, indicating that larger blocks may lead to inefficiencies if not managed properly.

Data Management Strategies

- Various strategies for managing data within cache are introduced. This includes techniques for optimizing access times and ensuring efficient use of available memory resources.

- A visual representation from a textbook is referenced to illustrate these concepts further, providing a practical example for better understanding.

Performance Metrics

- Performance metrics related to cache efficiency are discussed. These include considerations such as latency and throughput when accessing different types of memory.

- Historical context is provided by referencing advancements in technology since 1985 that have influenced current practices in memory management.

Challenges in Cache Management

- The conversation shifts towards challenges faced in managing caches effectively, including issues related to data consistency and retrieval speeds.