Computer Architecture, Lecture 9: The Data Storage Hierarchy. Cache Memory Organization.

New Section

In this section, the speaker delves into higher-level concepts related to computer memory and architecture.

Understanding Computer Memory

- The discussion shifts towards higher-level constructs like memory systems and commands.

- Exploring the structure of computer memory and how it functions within a system.

- Differentiating between various memory access architectures such as NUMA (Non-Uniform Memory Access).

- Addressing whether every byte in the system can be accessed in the same time (uniform access) or whether access time depends on where the byte is located, as in NUMA.

New Section

This part focuses on spatial configurations of processors and memory within a computer system.

Spatial Configuration of Processors and Memory

- Illustrating spatial arrangements of processors and memory banks for better understanding.

- Discussing the spatial distribution of processors and memory, highlighting the need for efficient utilization.

New Section

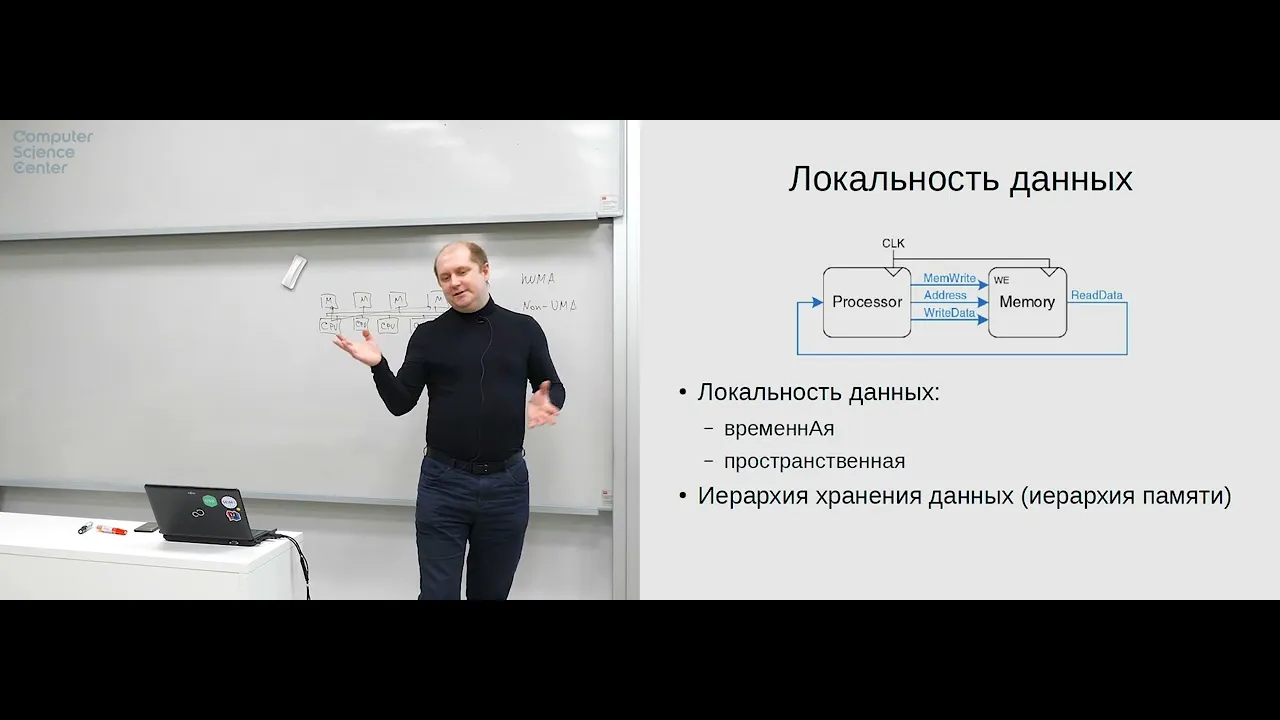

The conversation transitions to optimizing processor-memory interactions through locality principles.

Locality Principles in Optimization

- Highlighting challenges with naive optimization methods that lead to inefficient resource usage.

- Introducing the concept of locality in programming for enhancing speed and efficiency.

New Section

Delving deeper into temporal and spatial locality in program execution for improved performance.

Temporal and Spatial Locality Insights

- Explaining temporal locality through repeated access patterns to specific data elements during program execution.
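A minimal sketch of temporal locality. It assumes a tiny fully associative cache with LRU replacement; the cache model and the function name `count_hits` are hypothetical, chosen for illustration. A trace that keeps reusing the same addresses hits almost every time. A trace that never repeats an address gets no hits at all.

```python
from collections import OrderedDict


def count_hits(trace, capacity):
    """Count hits in a fully associative cache of `capacity` items with LRU replacement."""
    cache = OrderedDict()
    hits = 0
    for addr in trace:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)        # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used entry
            cache[addr] = None
    return hits


reuse = [0, 1] * 10          # a loop re-reading the same two variables: 20 accesses
no_reuse = list(range(20))   # 20 accesses, each to a different address

print(count_hits(reuse, capacity=4))     # 18 (only the first two accesses miss)
print(count_hits(no_reuse, capacity=4))  # 0
```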

Understanding Memory Hierarchy in Modern Processors

The speaker delves into the memory hierarchy of modern processors, which is ordered by speed and cost from registers down to RAM.

Memory Hierarchy Explained

- The hierarchy progresses from faster but more expensive memory like registers to slower but cheaper memory like RAM.

- Processors are manufactured by printing them on a substrate using methods like photolithography, creating semiconductor blocks that handle different types of instructions.

- Different levels of processor cache exist, with varying speeds and costs, leading up to cheaper but slower RAM as part of the memory hierarchy.

- Problems arise because memory modules may run at different frequencies. Even modules running at the same speed are physically separated from the processor, which adds delay.

Optimizing Memory Access for Performance

The discussion shifts towards challenges in memory access optimization for performance enhancement in processors.

Challenges in Memory Access Optimization

- Memory modules can operate at different frequencies, causing potential delays and inefficiencies due to physical separation.

- Heat dissipation becomes a concern when several consumers (cores or processors) access memory simultaneously.

Efficient Data Storage Hierarchy

Exploring the data storage hierarchy and its optimization for efficient data handling.

Data Storage Hierarchy Insights

- The data storage hierarchy includes registers, caches, RAM, hard drives (HDD), and even flash storage for persistent data retention.

- Emphasizes the importance of understanding locality to optimize performance without overspending on costly solutions.

Memory Access Strategies

Delving into strategies for optimizing memory access in terms of access time and cache hit rate.

Strategies for Memory Access Optimization

- Access time and hit rate must be balanced for efficient processing, avoiding both excessive misses and excessive delays.
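One common way to quantify this balance is the average memory access time:

AMAT = hit time + miss rate × miss penalty

The sketch below compares two hypothetical cache designs. The cycle counts and miss rates are made-up illustrative values, not figures from the lecture.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time, in the same units as hit_time and miss_penalty."""
    return hit_time + miss_rate * miss_penalty


# Illustrative values in processor cycles (hypothetical).
small_fast = amat(hit_time=1, miss_rate=0.10, miss_penalty=100)  # about 11 cycles
large_slow = amat(hit_time=3, miss_rate=0.02, miss_penalty=100)  # about 5 cycles
print(small_fast, large_slow)
```

With these numbers, the cache with the slower hit time wins because it misses far less often. With a cheaper miss penalty, the balance could tip the other way.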

New Section

In this section, the speaker discusses the three levels of cache memory architecture.

Cache Memory Architecture

- The speaker explains that there are three levels of cache memory: L1 cache, L2 cache, and L3 cache.

- The L1 cache is the smallest and fastest level, and it is where most misses occur.

- The L2 cache acts as an intermediate level between L1 and L3.

- The L3 cache, the third level, is organized in a way that resembles the NUMA architecture: access time can depend on where the data physically resides.
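With several cache levels, a miss at one level pays the access time of the next level. The average access time formula therefore nests from L1 outward. This is a minimal sketch that assumes local miss rates per level and uses illustrative cycle counts, not measurements of any real processor.

```python
def amat(levels, memory_time):
    """levels: [(hit_time, local_miss_rate), ...] ordered from L1 outward.

    A miss at one level costs the average access time of the next level,
    so the formula is evaluated from main memory back toward L1.
    """
    t = memory_time
    for hit_time, miss_rate in reversed(levels):
        t = hit_time + miss_rate * t
    return t


# Hypothetical cycle counts and local miss rates for L1, L2, L3.
with_l3 = amat([(4, 0.10), (12, 0.50), (40, 0.50)], memory_time=200)
without_l3 = amat([(4, 0.10), (12, 0.50)], memory_time=200)
print(with_l3, without_l3)  # about 12.2 and 15.2 cycles
```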

New Section

This part delves into simple inquiries regarding the topic at hand.

Simple Inquiry

- A straightforward question is posed to initiate further discussion.

- The question sets the stage for exploring more complex concepts related to operational memory and caching mechanisms.

New Section

Here, the focus shifts to main memory (RAM) and misses at that level.

Main Memory and Cache Misses

- Main memory is discussed in relation to caching mechanisms.

- At this level the interface changes: data on disk is accessed through a file interface, whereas main memory is accessed directly by address.
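To illustrate the two interfaces, the Python sketch below reads the same bytes from a temporary file twice. The first read uses the file interface (`seek`/`read`). The second maps the file into memory with the standard `mmap` module and indexes it like an array. The file and its contents are hypothetical.

```python
import mmap
import os
import tempfile

# Create a small file to play the role of data stored on disk.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"hello, memory hierarchy")
    path = f.name

# File interface: position with seek() and copy bytes out with read().
with open(path, "rb") as f:
    f.seek(7)
    via_file = f.read(6)

# Memory interface: the file is mapped into the address space and read by indexing;
# the OS loads the underlying pages on demand.
with open(path, "rb") as f:
    with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as m:
        via_mmap = m[7:13]

os.remove(path)
print(via_file, via_mmap)  # b'memory' b'memory'
```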

New Section

This segment elaborates on virtual memory and on misses in main memory.

Virtual Memory and Cache Misses

- Virtual memory is introduced in connection with misses in main memory.

- The swap partition is explained as an extension of main memory: it resides on disk but is used through the same interface as RAM.

New Section

Delving deeper into virtual memory systems and their functionalities.

Virtual Memory Systems

- Further detail is given on the swap partition and its role in moving data between disk storage and main memory.

- The speaker clarifies how main memory is addressed starting from address zero.
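The sketch below is a toy model of address translation with swapping. It assumes 4 KiB pages, a tiny number of physical frames, and FIFO replacement. Real systems use more elaborate policies, and the class and its names are hypothetical. A virtual address is split into a page number and an offset. If the page is not resident, a page fault "loads" it from swap. When RAM is full, the oldest page is evicted first.

```python
PAGE_SIZE = 4096  # assumed page size in bytes


class ToyVirtualMemory:
    """Hypothetical model: a page table maps virtual pages to physical frames.

    Pages that are not resident are treated as living in swap and are
    "loaded" on a page fault; FIFO replacement is used for simplicity.
    """

    def __init__(self, num_frames):
        self.num_frames = num_frames
        self.page_table = {}   # virtual page number -> physical frame number
        self.fifo = []         # resident pages, oldest first
        self.page_faults = 0

    def translate(self, vaddr):
        page, offset = divmod(vaddr, PAGE_SIZE)
        if page not in self.page_table:
            self.page_faults += 1
            if len(self.page_table) < self.num_frames:
                frame = len(self.page_table)         # a free frame is still available
            else:
                victim = self.fifo.pop(0)            # write the oldest page out to swap
                frame = self.page_table.pop(victim)  # and reuse its frame
            self.page_table[page] = frame            # load the page from swap
            self.fifo.append(page)
        return self.page_table[page] * PAGE_SIZE + offset


vm = ToyVirtualMemory(num_frames=2)
print(hex(vm.translate(0x1234)))  # page 1 -> frame 0: 0x234
print(hex(vm.translate(0x5000)))  # page 5 -> frame 1: 0x1000
print(hex(vm.translate(0x9010)))  # page 9 evicts page 1 -> frame 0: 0x10
print(vm.page_faults)             # 3
```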

New Section

Exploring how processor caches are organized and how they function.

Processor Cache Organization

- Detailed explanations are offered on how processor caches are structured for efficient data retrieval.

- Caches operate on contiguous blocks of data (cache lines) rather than on individual bytes, which is essential for efficient caching.

New Section

Discussing the significance of data locality in caching mechanisms.

Data Locality Importance

- Data locality plays a crucial role in making caching effective.

- Adjacent memory cells are cached together because, when one cell is accessed, its neighbors are likely to be accessed soon.
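This sketch shows why whole lines are loaded. It assumes an unbounded cache, so that only the line size matters; the function name is hypothetical. Reading 256 consecutive bytes costs 256 misses with 1-byte lines but only 4 misses with 64-byte lines. A stride of 64 bytes defeats spatial locality because every access lands in a new line.

```python
def count_misses(byte_addresses, line_size):
    """Misses in an unbounded cache that loads whole lines of `line_size` bytes."""
    loaded_lines = set()
    misses = 0
    for addr in byte_addresses:
        line = addr // line_size   # every byte of a line shares this number
        if line not in loaded_lines:
            misses += 1
            loaded_lines.add(line)
    return misses


sequential = range(256)              # 256 neighboring bytes
strided = range(0, 256 * 64, 64)     # 256 bytes, each 64 bytes apart

print(count_misses(sequential, line_size=1))   # 256
print(count_misses(sequential, line_size=64))  # 4
print(count_misses(strided, line_size=64))     # 256
```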

New Section

Unpacking the concept of data locality further for enhanced understanding.

Understanding Data Locality

- Data locality is linked to the von Neumann principles: instructions and data reside in the same memory and instructions are executed sequentially, which is why caching works well.

- Cache mapping schemes, such as direct mapping, are briefly introduced.

New Section

The speaker discusses the complexity of memory management and questions the necessity of such intricacy in memory organization.

Understanding Memory Complexity

- The speaker questions the need for complex memory organization and suggests that cutting memory into pieces could simplify processes.

- Although locality is accepted as a good answer, the speaker finds it insufficient on its own, indicating that the reasoning is not yet fully clear.

- By mapping memory onto a cache of 8 slots, the speaker demonstrates how locality allows data to be read efficiently.
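A minimal sketch of a direct-mapped cache. It assumes that the "8" in the example refers to a cache of 8 lines and that addresses are given as block numbers; the function name is hypothetical. Each block can go into exactly one line, `block % 8`. The remaining high part, `block // 8`, is stored as the tag, which tells apart blocks that share a line.

```python
NUM_LINES = 8  # assumed: a cache with 8 lines


def simulate_direct_mapped(block_trace, num_lines=NUM_LINES):
    """Return (hits, misses) for a direct-mapped cache over a trace of block numbers."""
    lines = [None] * num_lines   # each entry holds the tag of the cached block, or None
    hits = misses = 0
    for block in block_trace:
        index = block % num_lines    # the only line this block may occupy
        tag = block // num_lines     # distinguishes blocks sharing that line
        if lines[index] == tag:
            hits += 1
        else:
            misses += 1
            lines[index] = tag       # replace whatever was there
    return hits, misses


print(simulate_direct_mapped([0, 1, 2, 3, 0, 1, 2, 3]))  # (4, 4)
print(simulate_direct_mapped([0, 8, 0, 8, 0, 8]))        # (0, 6): blocks 0 and 8 collide on line 0
```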

New Section

The discussion delves into the implications of segmenting memory and its impact on data retrieval efficiency.

Segmenting Memory for Efficiency

- Exploring how segmenting memory enables efficient data retrieval by covering areas whose sizes are powers of two (for example, 2^3 = 8).

- The logic behind this segmentation is clarified, emphasizing the importance of understanding these concepts for effective memory management.

New Section

Delving into the complexities of memory allocation and utilization within cache systems.

Memory Allocation Complexity

- Discussing how certain aspects of memory allocation remain unclear, highlighting challenges in utilizing specific sections effectively.

- Working through byte and bit calculations that show how an address is interpreted inside the cache, in order to optimize data access.

New Section

Analyzing address interpretation methods within cache systems for enhanced data processing capabilities.

Address Interpretation Strategies

- Addresses are interpreted not merely as linear numbers but as sets of fields that the cache uses to handle data.

- A hardware scheme is introduced that locates data by the position of its cache line.
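Splitting an address into fields is a matter of shifts and masks. The sketch assumes a 32-bit address, 64-byte lines (6 offset bits) and 8 lines (3 index bits). These sizes are illustrative, not taken from the lecture. The remaining 23 high bits form the tag.

```python
OFFSET_BITS = 6  # 64-byte lines (assumed)
INDEX_BITS = 3   # 8 lines (assumed)


def split_address(addr):
    """Split a 32-bit address into (tag, index, offset) fields."""
    offset = addr & ((1 << OFFSET_BITS) - 1)                 # byte within the line
    index = (addr >> OFFSET_BITS) & ((1 << INDEX_BITS) - 1)  # which cache line
    tag = addr >> (OFFSET_BITS + INDEX_BITS)                 # the remaining high bits
    return tag, index, offset


def join_address(tag, index, offset):
    """Inverse of split_address: the three fields together form the original address."""
    return (tag << (OFFSET_BITS + INDEX_BITS)) | (index << OFFSET_BITS) | offset


tag, index, offset = split_address(0x12345678)
print(hex(tag), index, offset)  # 0x91a2b 1 56
```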

New Section

Designing the cache's internal structures so that data can be stored and retrieved efficiently.

Optimizing Data Storage

- A structure is demonstrated that fits into a 32-bit word, making data quick to access and process.

Key Concepts in Caching and Memory Management

In this section, the speaker covers key concepts of caching and memory management, highlighting the importance of using memory efficiently.

Understanding Key Moments

- The speaker emphasizes the significance of taking time to analyze and refine expressions for optimal performance.

- By refining structures, there is an opportunity to enhance flexibility in managing memory regions efficiently.

- Allowing data to be placed in an arbitrary line within a set increases the flexibility of memory operations.

- Several blocks that map to the same set can be cached at the same time, which a direct-mapped cache does not allow.

- Tags stored with the data identify which memory block each line holds.
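This sketch models a set-associative cache with LRU replacement inside each set; the parameters and function name are hypothetical. Both layouts below have 8 lines in total. In a direct-mapped layout (8 sets × 1 way), blocks 0 and 8 keep evicting each other. In a 2-way layout (4 sets × 2 ways), both blocks fit.

```python
from collections import OrderedDict


def simulate_set_associative(block_trace, num_sets, ways):
    """Return (hits, misses); each set keeps up to `ways` tags in LRU order."""
    sets = [OrderedDict() for _ in range(num_sets)]
    hits = misses = 0
    for block in block_trace:
        s = sets[block % num_sets]   # the set this block maps to
        tag = block // num_sets      # identifies the block within its set
        if tag in s:
            hits += 1
            s.move_to_end(tag)       # mark as most recently used
        else:
            misses += 1
            if len(s) >= ways:
                s.popitem(last=False)  # evict the least recently used way
            s[tag] = None
    return hits, misses


trace = [0, 8, 0, 8, 0, 8]
print(simulate_set_associative(trace, num_sets=8, ways=1))  # (0, 6): direct-mapped
print(simulate_set_associative(trace, num_sets=4, ways=2))  # (4, 2): 2-way set-associative
```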

Enhancing Cache Efficiency

This segment focuses on strategies to improve cache efficiency by maximizing data utilization within memory structures.

Exploring Optimization Techniques

- Optimizing memory allocation facilitates efficient utilization of cache space.

- Analyzing different scenarios aids in understanding cache behavior under varying conditions.

- Implementing diverse cache configurations enhances data accessibility and retrieval speed.

Memory Allocation Strategies

The discussion shifts towards exploring diverse memory allocation strategies to enhance system performance.

Unpacking Memory Allocation Approaches

- Associative caching is more flexible but more expensive. On every lookup, the tag must be compared against all lines of the set, which requires additional comparison hardware.

- Leveraging spatial locality optimizes data access patterns for improved system performance.

Optimizing Cache Utilization

Delving into techniques that optimize cache utilization for enhanced computational efficiency.

Maximizing Cache Potential

- Efficiently utilizing cache space through strategic data placement boosts overall system performance.

Spatial Locality Considerations

Examining the impact of spatial locality on data access patterns and system efficiency.

Understanding Spatial Locality Effects

New Section

In this section, the speaker discusses the concept of cache and its relevance in data management.

Cache Functionality

- The speaker explains that by optimizing data retrieval processes, it is possible to enhance efficiency and store more data effectively.

- Using the analogy of borrowing books from a library, the speaker illustrates how caching works in storing and accessing data.

- The discussion shifts to the importance of cache in maintaining proximity to frequently accessed data for quicker retrieval.

- Supporting spatial locality is highlighted as a simple way to increase performance: data located close to recently accessed data is loaded into the cache along with it.

- Temporal locality means that recently accessed data is likely to be accessed again soon, which also shapes caching strategies.

New Section

This section delves into addressing memory locations efficiently through cache mechanisms.

Memory Addressing

- The discussion focuses on memory addressing with an emphasis on efficient access patterns based on recent accesses.

- Sequential reads and the handling of writes are mentioned as factors influencing caching strategies.

- Various caching strategies are explored based on spatial and temporal locality principles for improved computational efficiency.

New Section

Here, the speaker elaborates on cache mapping techniques and their impact on program execution.

Cache Mapping Strategies

- The direct-mapped cache is introduced as a simple scheme that supports efficient memory access during program execution.

- On a cache miss, a whole block of data is loaded into the cache at once rather than a single word, so nearby accesses that follow can be served from the cache.

New Section

This part explores the significance of caching in program execution and its implications for processing efficiency.

Program Execution Efficiency

- Instructions are usually executed in sequence. Because whole lines are cached, the following instructions are often already in the cache, which minimizes fetch delays.

New Section

The focus here is on cache optimization strategies and their impact on computational performance.

Cache Optimization

- The optimal cache size is discussed in relation to how much data the program actually needs to keep close at hand.
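This sketch shows how cache size interacts with the amount of data a program keeps reusing. It assumes a fully associative LRU cache and a program that cycles over 5 blocks; both are hypothetical. If the cache is one line too small, LRU evicts each block just before it is needed again, so every access misses. If there is room for all 5 blocks, only the first pass misses.

```python
from collections import OrderedDict


def lru_hit_rate(trace, capacity):
    """Fraction of accesses that hit in a fully associative LRU cache of `capacity` blocks."""
    cache = OrderedDict()
    hits = 0
    for block in trace:
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used block
            cache[block] = None
    return hits / len(trace)


loop = list(range(5)) * 100    # a program that keeps cycling over 5 blocks
print(lru_hit_rate(loop, capacity=4))  # 0.0
print(lru_hit_rate(loop, capacity=5))  # 0.99
```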

New Section

This segment delves into cache size optimization strategies based on access patterns and memory requirements.

Cache Size Optimization

Detailed Explanation of Data Storage Strategies

In this section, the speaker discusses two data storage strategies and highlights their differences through a hypothetical system involving a writer and reader.

Writer's Data Storage Strategy

- In the first strategy, the data is written to memory as part of the write operation itself, so the writer waits until memory is updated. This is commonly called write-through.

- In the second strategy, the data is written only to the cache, and memory is updated later. This is commonly called write-back.

- After the data is written to the cache, control returns to the writer, which can continue with other tasks.

Efficiency Comparison of Strategies

- Evaluating the efficiency of algorithms based on speed and reliability.

- Considering which strategy is faster in terms of processing speed.

- Discussing the importance of consistency and reliability in data storage systems.
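This is a toy comparison of the two write strategies. It assumes a cache that never evicts, with a dict standing in for main memory; the class is hypothetical. Write-through updates memory on every write. Write-back only marks the data dirty and writes it to memory once, on `flush()`. That is faster, but memory stays stale until then, which is the reliability trade-off.

```python
class ToyCache:
    """Hypothetical cache that never evicts, in front of a dict acting as main memory."""

    def __init__(self, memory, write_back):
        self.memory = memory
        self.write_back = write_back
        self.data = {}          # cached address -> value
        self.dirty = set()      # addresses changed in the cache but not yet in memory
        self.memory_writes = 0

    def write(self, addr, value):
        self.data[addr] = value
        if self.write_back:
            self.dirty.add(addr)          # write-back: defer the memory update
        else:
            self.memory[addr] = value     # write-through: update memory right away
            self.memory_writes += 1

    def flush(self):
        """Copy every dirty value to memory (as would happen when lines are evicted)."""
        for addr in self.dirty:
            self.memory[addr] = self.data[addr]
            self.memory_writes += 1
        self.dirty.clear()


wt_memory, wb_memory = {}, {}
wt = ToyCache(wt_memory, write_back=False)
wb = ToyCache(wb_memory, write_back=True)
for i in range(100):          # 100 writes to the same address
    wt.write(0, i)
    wb.write(0, i)

print(wt.memory_writes, wt_memory)  # 100 {0: 99}
print(wb.memory_writes, wb_memory)  # 0 {}  (memory is stale until the flush)
wb.flush()
print(wb.memory_writes, wb_memory)  # 1 {0: 99}
```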

Synchronous vs. Asynchronous Function Calls

The speaker delves into synchronous and asynchronous function calls, explaining their distinctions and implications within programming contexts.

Synchronous Function Calls

- A synchronous call blocks the caller: after sending a request, it must wait for the response before proceeding.

Asynchronous Function Calls

- Asynchronous calls allow for continued operation without waiting for a response.
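In Python, the difference can be sketched with the standard `concurrent.futures` module. A plain call blocks until it returns. By contrast, `submit()` returns a `Future` immediately, and the caller collects the result later. The `slow_write` function and its delay are hypothetical stand-ins for a slow operation.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_write(value):
    time.sleep(0.05)          # stand-in for a slow device write
    return f"stored {value}"


# Synchronous: the caller is blocked until slow_write returns.
result = slow_write(1)
print(result)

# Asynchronous: submit() returns a Future at once; the caller can keep working
# and collects the result later.
with ThreadPoolExecutor(max_workers=1) as pool:
    future = pool.submit(slow_write, 2)
    print("caller keeps working; done yet?", future.done())
    print(future.result())    # now wait for the response
```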