Uncertainty - Lecture 2 - CS50's Introduction to Artificial Intelligence with Python 2020

Likelihood Weighting

In this section, the concept of likelihood weighting is introduced as an alternative procedure to avoid discarding samples that do not match the evidence. The process involves fixing the values of evidence variables and sampling the non-evidence variables based on the probability distributions in a Bayesian network. Each sample is then weighted by its likelihood.

Procedure of Likelihood Weighting

- Fix the values of evidence variables and do not sample them.

- Sample all other non-evidence variables using the Bayesian network and their probability distributions.

- Weight each sample by its likelihood, considering how likely the evidence is to occur in that particular sample.

- Likelihood is defined as the probability of all evidence given a specific sample.

- Unlike earlier sampling, where every sample counted equally, each sample now contributes in proportion to its likelihood when the final distribution is computed.

Example of Likelihood Weighting

An example is provided to illustrate how likelihood weighting works in practice.

Sampling Procedure with Fixed Evidence Variable

- Start by fixing the evidence variable (e.g., train is on time).

- Sample from other non-evidence variables (e.g., rain, track maintenance, appointment).

- Generate a sample by fixing the evidence variable and sampling the others.

Weighting Samples Based on Likelihood

- Calculate the weight of each sample based on how probable it is for the evidence to occur given other variable values.

- The weight can be determined using conditional probabilities associated with the evidence variable.

- Repeat this sampling and weighting procedure for multiple samples to obtain a distribution.
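
The procedure above can be sketched in plain Python. This is a minimal sketch, not the lecture's implementation: the network shape (Rain, Maintenance, Train, Appointment) follows these notes, but every probability value and every name in the code (`P_RAIN`, `weighted_sample`, and so on) is an assumption chosen for illustration.

```python
import random

# Illustrative probabilities (assumed for this sketch, not taken from the notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}
P_MAINTENANCE_YES = {"none": 0.4, "light": 0.2, "heavy": 0.1}  # P(maintenance = yes | rain)
P_TRAIN_ON_TIME = {  # P(train = on time | rain, maintenance)
    ("none", "yes"): 0.8, ("none", "no"): 0.9,
    ("light", "yes"): 0.6, ("light", "no"): 0.7,
    ("heavy", "yes"): 0.4, ("heavy", "no"): 0.5,
}
P_ATTEND = {"on time": 0.9, "delayed": 0.6}  # P(appointment = attend | train)


def weighted_sample(rng):
    """Draw one sample with the evidence train = 'on time' held fixed."""
    rain = rng.choices(list(P_RAIN), weights=list(P_RAIN.values()))[0]
    maintenance = "yes" if rng.random() < P_MAINTENANCE_YES[rain] else "no"
    train = "on time"  # evidence variable: fixed, never sampled
    appointment = "attend" if rng.random() < P_ATTEND[train] else "miss"
    # Weight = probability of the evidence given the sampled values of its parents.
    weight = P_TRAIN_ON_TIME[(rain, maintenance)]
    sample = {"rain": rain, "maintenance": maintenance,
              "train": train, "appointment": appointment}
    return sample, weight


def estimate_rain_given_on_time(n, seed=0):
    """Approximate P(rain | train = on time) from n weighted samples."""
    rng = random.Random(seed)
    totals = {value: 0.0 for value in P_RAIN}
    for _ in range(n):
        sample, weight = weighted_sample(rng)
        totals[sample["rain"]] += weight  # add the weight, not a count of 1
    norm = sum(totals.values())
    return {value: total / norm for value, total in totals.items()}


estimate = estimate_rain_given_on_time(10_000)
print(estimate)
```

No sample is thrown away here: every draw agrees with the evidence by construction, and the weight accounts for how plausible that evidence was in the sampled situation.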

Other Sampling Methods

Likelihood weighting is one of several sampling methods that approximate exact inference. The discussion then turns to a different problem: reasoning about uncertainty in variables whose values change over time.

Markov Assumption

- The Markov assumption is a simplifying assumption made in probabilistic modeling.

- It states that the current state depends only on a finite fixed number of previous states.

- For example, when predicting tomorrow's weather, we can rely on today's weather alone, since it is the most recent state, rather than on the full history of earlier days.

Modeling Values Over Time

This section introduces the concept of modeling values that change over time and dealing with uncertainty in future predictions.

Introducing Time Steps

- Each possible time step (e.g., day) is associated with a random variable representing the value at that time.

- Variables are defined for each specific time step (e.g., x sub t represents weather at time t).

Handling Large Amounts of Data

- As the analysis spans longer periods, handling large amounts of data becomes challenging.

- Making accurate assumptions about dependencies between previous and current states helps simplify calculations.

Conclusion

The transcript covers the concept of likelihood weighting as an alternative procedure to handle evidence variables. It explains how to fix evidence values, sample non-evidence variables, and weight samples based on their likelihood. An example is provided to illustrate this process. Additionally, other sampling methods and modeling values over time are discussed, including the Markov assumption and handling large amounts of data.

Introduction to Markov Chains

In this section, the speaker introduces the concept of Markov chains and their mathematical foundations in probability theory.

Understanding Probability Theory and Markov Chains

- Probability theory provides a mathematical foundation for analyzing uncertain events.

- Markov chains are a way to model systems that exhibit a certain level of dependence on previous events.

- By using the Markov assumption, we can represent these dependencies mathematically.

Key Concepts in Probability

This section focuses on understanding key concepts within probability theory and their relevance to Markov chains.

The Relationship Between Rainy Days

- When it's raining, there is a tendency for it to continue raining.

- This relationship can be captured using probability concepts.

Using Probability for Inferences

- Probability allows us to make judgments about the world based on available information.

- We can use probability to answer questions like the likelihood of a specific sequence of events occurring.

Representing Ideas with Models

Here, we explore how probability and mathematical models can be used together to represent ideas.

Using Mathematical Models

- Mathematical models help us represent real-world phenomena in terms of probabilities.

- These models enable us to analyze and draw conclusions based on available information.

Programming AI with Probability

- By incorporating probability into programming, we can develop AI systems that utilize probabilistic information for decision-making.

Possible Worlds and Distributions

This section delves into the concept of possible worlds and distributions within probability theory.

Possible Worlds Representation

- Possible worlds refer to different outcomes or states that a system can exist in.

- A single possible world is written with the lowercase Greek letter omega (ω); the set of all possible worlds is written with the capital letter (Ω).

Dealing with Probabilities and Distributions

- Probabilities within a model can be represented using distributions.

- Python libraries like Pomegranate provide tools for working with these variables.

Defining Probability in Markov Models

Here, we explore how probability is defined and encoded in Markov models.

Syntax for Probability Representation

- Probability values are represented using the capital letter P followed by parentheses.

- The content within the parentheses represents the event or possible world being considered.

Encoding Information into Programs

- Probability information is encoded into Python programs to enable computations and analysis.

- A possible world is denoted by the lowercase Greek letter omega (ω), and its probability is written P(ω).

Starting Distributions and Axioms of Probability

This section discusses starting distributions and basic axioms of probability.

Starting Distributions in Markov Models

- Every Markov model begins at a specific point in time.

- Starting distributions define the initial probabilities of different states or events.

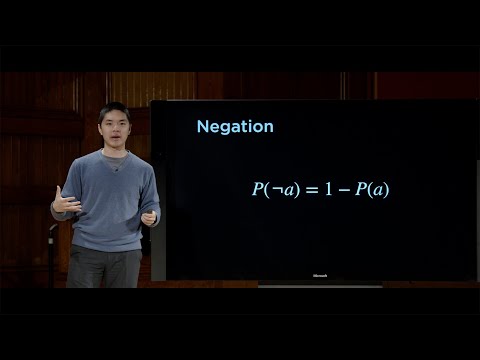

Axioms of Probability

- There are fundamental rules that govern probability calculations.

- One key rule is that probabilities must range between zero and one, inclusive.

Transitioning Between States in Markov Models

This section focuses on transitioning between states in Markov models.

Transition Model Definition

- Transition models describe how a system moves from one state to another over time.

- These models capture the probabilities associated with state transitions.

Example: Weather Transitions

- An example transition model can represent weather conditions transitioning from sunny to rainy or vice versa.

- Each transition has an associated probability value indicating its likelihood.

Sampling from a Markov Chain

Here, we explore sampling from a Markov chain to simulate instances of weather conditions.

Simulating Weather Instances

- Sampling from a Markov chain allows us to simulate different instances of weather conditions.

- In Python, a library such as Pomegranate (mentioned earlier in these notes) can define a Markov chain and draw samples from it.
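
To make the sampling step concrete without depending on any particular library, here is a minimal sketch using only the standard library. The starting distribution, the transition probabilities, and the helper names `draw` and `sample_chain` are assumptions made for illustration.

```python
import random

# Assumed starting distribution and transition model (illustrative values).
START = {"sun": 0.5, "rain": 0.5}
TRANSITIONS = {
    "sun": {"sun": 0.8, "rain": 0.2},   # P(tomorrow | today = sun)
    "rain": {"sun": 0.3, "rain": 0.7},  # P(tomorrow | today = rain)
}


def draw(distribution, rng):
    """Pick one value from a {value: probability} mapping."""
    values = list(distribution)
    return rng.choices(values, weights=[distribution[v] for v in values])[0]


def sample_chain(length, seed=None):
    """Sample a sequence of states: the first from START, the rest from TRANSITIONS."""
    rng = random.Random(seed)
    state = draw(START, rng)
    chain = [state]
    for _ in range(length - 1):
        state = draw(TRANSITIONS[state], rng)  # depends only on the current state
        chain.append(state)
    return chain


chain = sample_chain(10, seed=1)
print(chain)
```

Each new state is drawn using only the state immediately before it, which is the Markov assumption in code form.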

Interpreting Probability Values

- Probability values determine the likelihood of specific events occurring.

- Higher probability values indicate a higher likelihood, while lower values indicate a lower likelihood.

Conclusion

This section concludes the discussion on Markov chains and their application in probability theory.

Applying Markov Models

- Markov models provide a framework for analyzing systems with dependencies on previous events.

- They can be used to answer questions and make predictions based on available information.

Markov Models and Sensor Models

In this section, the speaker discusses Markov models and sensor models in the context of probability and inference. The speaker explains how Markov models follow a distribution based on the sequence of values, such as sunny or rainy days. They also mention that knowing the values of individual states is crucial for Markov models. Sensor models are introduced as a way to translate observed information into probabilities related to the hidden state of the world.

Markov Models

- Working with these models often means adding up many individual probabilities to obtain an overall probability.

- These models rely on knowing the values of individual states.

- The notation Σ (sigma) is used to represent summation in these models.

Sensor Models

- Sensor models help translate observed information into probabilities related to the hidden state of the world.

- The speaker explains that in practice, we often don't know the exact state of the world but can sense some information about it through sensors.

- Each probability in a sensor model is the probability of a particular observation given the hidden state (for example, the probability of seeing an umbrella given that it is raining).

- The probabilities range between zero and one, with zero meaning impossible and one meaning certain.

Example: Dice Rolls

- An example is given using dice rolls as a simple case for understanding sensor models.

- The probability of rolling a specific number on a fair die is equal for each outcome (e.g., 1/6).

- The observed data from sensors can provide information about what actually happens in relation to the hidden state.

Complex Models

- As our models become more complex, such as considering multiple dice or robot positions, reasoning about hidden states becomes more challenging.

- Multiple observations can influence and provide insights into hidden states.

Inferring Hidden States

In this section, the speaker discusses how to infer hidden states based on observations and sensor data. They provide examples of robot positions and voice recognition systems to illustrate the concept.

Inferring Robot Positions

- The speaker explains that a robot's true position is a hidden state that can be inferred using sensor data.

- The observed information about possible obstacles or distances helps determine the hidden state.

- The hidden state influences the observations the robot makes, which is what allows those observations to serve as evidence about its position.

Voice Recognition Systems

- Voice recognition systems, like Alexa or Apple's voice recognition, rely on inferring hidden states from observed speech data.

- All possible worlds are considered to calculate probabilities related to different words or phrases being spoken.

Conclusion

This section concludes the discussion on Markov models and sensor models. It emphasizes the importance of understanding hidden states and using observed information to infer them.

Understanding Hidden States

- Knowing and understanding hidden states is crucial for modeling and inference.

- Sensor models help translate observed information into probabilities related to these hidden states.

Importance of Observations

- Observations provide valuable insights into hidden states, allowing us to make inferences about what is happening in the world.

Complexity in Modeling

- As models become more complex, reasoning about multiple observations and their influence on hidden states becomes more challenging.

The True Nature of the World

This section discusses how the true nature of the world can be uncertain and how AI systems rely on observations to make inferences.

Uncertainty in Observations

- The AI system only has access to audio waveforms as observations.

- Different word sequences are not all equally likely, unlike the outcomes of fair dice rolls.

- Complex models can represent real-world scenarios, but certainty is not guaranteed.

Inference from Observations

- AI systems infer spoken words based on audio waveforms.

- Not every possible world is equally likely.

- Fair dice rolls have equal probabilities for each number.

Probability and Possible Worlds

- All possible worlds with fair dice rolls are considered equally likely.

- User engagement data provides information about user behavior but does not guarantee equal probabilities for all outcomes.

Inferring Hidden States

- AI systems often do not know the hidden true state of the world.

- Observations provide indirect information about the hidden state.

- Noise in observations makes it challenging to determine the hidden state with certainty.

Inferring Hidden State - Weather Example

This section uses a weather example to illustrate how observations can help infer hidden states.

Weather as Hidden State

- The hidden state represents whether it's sunny or rainy.

- An AI system inside a building has access to camera observations.

Using Observations to Predict Weather

- Seeing umbrellas suggests rain, while their absence suggests sunshine; the observation is evidence rather than proof.

- Probabilities can be assigned based on possible outcomes (e.g., 1 out of 36 for a sum of 12).

Inferring Hidden State from Observations

- By analyzing camera observations, predictions about weather conditions can be made.

- Different probabilities exist for different outcomes based on observed data.
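
One way to turn umbrella observations into a belief about the weather is to combine a sensor model with a transition model and update the belief one observation at a time. The sketch below assumes all of its numbers (`START`, `TRANSITIONS`, `SENSOR`) and the function name `filter_weather`; none of them come from the notes.

```python
# Assumed models (illustrative values only).
START = {"sun": 0.5, "rain": 0.5}
TRANSITIONS = {
    "sun": {"sun": 0.8, "rain": 0.2},
    "rain": {"sun": 0.3, "rain": 0.7},
}
SENSOR = {  # P(observation | hidden state)
    "sun": {"umbrella": 0.2, "no umbrella": 0.8},
    "rain": {"umbrella": 0.9, "no umbrella": 0.1},
}


def normalize(scores):
    """Scale non-negative scores so they sum to 1."""
    total = sum(scores.values())
    return {state: score / total for state, score in scores.items()}


def filter_weather(observations):
    """Return P(current weather | all observations so far)."""
    belief = dict(START)
    for step, observation in enumerate(observations):
        if step > 0:
            # Predict: push the previous belief through the transition model.
            belief = {
                today: sum(belief[prev] * TRANSITIONS[prev][today] for prev in belief)
                for today in TRANSITIONS
            }
        # Update: reweight each state by how well it explains the observation.
        belief = normalize(
            {state: belief[state] * SENSOR[state][observation] for state in belief}
        )
    return belief


belief = filter_weather(["umbrella", "umbrella", "no umbrella"])
print(belief)
```

The hidden state is never observed directly; the belief only shifts toward whichever state makes the observations more probable.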

Inferring Hidden State - Building Example

This section presents a building example to further explain how observations can help infer hidden states.

Observations in a Building

- An AI system inside a building has access to observations about employees carrying umbrellas.

- Employees carrying umbrellas suggest rain, while their absence suggests sunshine.

Predicting Weather from Observations

- By analyzing umbrella-carrying behavior, predictions about weather conditions can be made.

- Different probabilities exist for different outcomes based on observed data.

Probability and Possible Worlds

This section emphasizes the concept of probability and its relationship with possible worlds.

Probability Calculation

- Probabilities are calculated based on the number of equally likely possible worlds.

- The sum of two dice rolls has 36 equally likely possible outcomes.

Probability Distribution

- Different sums have different probabilities based on the number of possible combinations.

- The probability of rolling a sum of 12 is 1 out of 36, while the probability of rolling a sum of 7 is higher.

Conclusion

The transcript discusses how AI systems rely on observations to make inferences about hidden states. It highlights that not all possible worlds are equally likely and that probabilities can be assigned based on observed data. The examples provided illustrate how observations can help predict weather conditions and make probabilistic calculations.

Understanding Probability with Multiple Dice

In this section, the speaker discusses how probability becomes more interesting when dealing with multiple dice. They explain the concept of possible worlds and how to reason about the probabilities of different outcomes.

Modeling Probabilities with Two Dice

- When considering two dice, we can imagine all the possible combinations of rolls as "possible worlds."

- Each die has six possible outcomes (numbers 1 to 6), resulting in a total of 36 equally likely possible worlds.

- The sums of the two rolls can vary, and not all sums are equally likely.

- For example, there are six possible combinations that result in a sum of seven, but only one combination that results in a sum of twelve.

Calculating Conditional Probabilities

- Conditional probability is the degree of belief in a proposition given some evidence that has already been revealed.

- It is represented as P(A|B), where A is the event we want to calculate the probability for and B is the known evidence.

- Conditional probability calculations are essential for AI systems that need to make predictions based on existing knowledge.
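
As a small worked case, conditional probability can be computed by counting possible worlds for two fair dice, using the standard identity P(A|B) = P(A and B) / P(B). The helper names below (`probability`, `conditional`, and the event functions) are hypothetical and exist only for this sketch.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely worlds for two fair dice.
WORLDS = list(product(range(1, 7), repeat=2))


def probability(event):
    """Fraction of worlds in which the event holds."""
    return Fraction(sum(1 for world in WORLDS if event(world)), len(WORLDS))


def conditional(a, b):
    """P(a | b) = P(a and b) / P(b)."""
    return probability(lambda world: a(world) and b(world)) / probability(b)


def sum_is_12(world):
    return world[0] + world[1] == 12


def first_is_6(world):
    return world[0] == 6


print(probability(sum_is_12))              # 1/36
print(conditional(sum_is_12, first_is_6))  # 1/6
```

Knowing that the first die shows a six rules out 30 of the 36 worlds, which is why the probability of a sum of twelve rises from 1/36 to 1/6.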

Examples of Conditional Probability

- An example scenario could be calculating the probability of rain today given that it rained yesterday.

- By incorporating additional evidence into our calculations, we can refine our understanding and make more accurate predictions.

Reasoning About Sums with Two Dice

In this section, the speaker explores how to reason about probabilities when considering the sum of two dice rolls. They discuss patterns and differences in probabilities based on different sums.

Possible Worlds and Sum Probabilities

- Not all sums are equally likely when rolling two dice.

- Some sums have multiple combinations (e.g., seven), while others have only one combination (e.g., twelve).

- The probability of a specific sum can be calculated by dividing the number of favorable outcomes by the total number of possible worlds.

Comparing Sum Probabilities

- The probability of rolling a sum of seven is higher than the probability of rolling a sum of twelve.

- The probability of rolling a sum of seven is 6/36, while the probability of rolling a sum of twelve is 1/36.
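
The full distribution over sums can be obtained by enumerating the 36 worlds and counting how many produce each sum. This is a short sketch; the variable names are chosen here for illustration.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Enumerate every (first die, second die) pair and count each sum.
rolls = list(product(range(1, 7), repeat=2))
counts = Counter(a + b for a, b in rolls)

# Probability of a sum = favorable worlds / total worlds.
distribution = {
    total: Fraction(count, len(rolls)) for total, count in sorted(counts.items())
}

for total, p in distribution.items():
    print(total, p)
```

`Fraction` reduces automatically, so 6/36 is shown in lowest terms.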

Conditional Probability in AI

- Conditional probabilities are crucial for AI systems to make informed decisions based on existing knowledge.

- They allow us to calculate the likelihood of an event given certain evidence, improving our ability to predict outcomes.

Unconditional and Conditional Probability

In this section, the speaker explains the difference between unconditional and conditional probabilities. They discuss how conditional probabilities are more relevant when dealing with AI systems that need to make predictions based on available evidence.

Unconditional Probability

- Unconditional probability refers to the degree of belief in a proposition without any additional evidence or knowledge.

- It represents our abstract understanding of the likelihood of an event occurring.

Conditional Probability

- Conditional probability considers additional evidence or knowledge when calculating the likelihood of an event.

- It represents our degree of belief in a proposition given some known information about the world.

Importance in AI Systems

- When training AI systems, conditional probabilities are more relevant as they allow for more accurate predictions based on available evidence.

- Understanding conditional probabilities helps AI systems reason and make informed decisions about real-world scenarios.

Random Variables and Probability Distributions

This section introduces the concept of random variables and probability distributions. It explains how random variables can have different possible values and how we can assign probabilities to each value.

Understanding Random Variables

- A random variable is a variable that can take on different values.

- The set of values that a random variable can take on is called its domain.

- Different random variables can have different probabilities for each possible value.

Probability Distributions

- A probability distribution represents the probabilities of each possible value of a random variable.

- The probability distribution for a random variable Flight, for example, could be represented as follows:

- On-time: 60%

- Delayed: 30%

- Canceled: 10%

- The sum of all probabilities in a probability distribution should be equal to 1.

Representing Probability Distributions

- Probability distributions can be represented using vectors.

- A vector is a sequence of values.

- For example, the probability distribution for Flight could be represented as [0.6, 0.3, 0.1], where the first value represents the probability of being on time, the second value represents the probability of being delayed, and the third value represents the probability of being canceled.
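
In code, the same distribution can be kept either as a vector with an agreed ordering or as a mapping from values to probabilities. The sketch below uses the Flight numbers from these notes; the names `FLIGHT_VALUES` and `is_valid_distribution` are hypothetical.

```python
import math

# The ordering of the vector must be agreed on in advance.
FLIGHT_VALUES = ("on time", "delayed", "canceled")
flight_vector = [0.6, 0.3, 0.1]

# Pairing each value with its probability makes the ordering explicit.
flight = dict(zip(FLIGHT_VALUES, flight_vector))


def is_valid_distribution(distribution):
    """Every probability lies in [0, 1] and the probabilities sum to 1."""
    probs = distribution.values()
    return all(0 <= p <= 1 for p in probs) and math.isclose(sum(probs), 1.0)


print(flight["delayed"])
print(is_valid_distribution(flight))
```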

Concise Notation and Independence

This section discusses how to interpret and represent probability distributions using concise notation. It also introduces the concept of independence in probability theory.

Concise Notation for Probability Distributions

- Probability distributions can be represented more concisely using angle brackets and vectors.

- For example, instead of listing each probability separately, we can write the distribution as P(Flight) = <0.6, 0.3, 0.1>, with the values in a fixed order (on time, delayed, canceled).

- Each value in the vector corresponds to a specific outcome or value of the random variable.

Independence in Probability Theory

- Independence refers to the idea that the probability of one event does not influence the probability of another event.

- In the context of rolling two dice, for example, the outcome of one die roll does not affect the probabilities of the other die roll.

- However, in some cases, events or random variables may not be independent. For example, cloudy weather might increase the probability of rain later in the day.

Independence: Definition and Implications

This section explores the mathematical definition and implications of independence in probability theory.

Mathematical Definition of Independence

- Mathematically, independence is defined using conditional probabilities.

- The probability of two independent events A and B occurring together is equal to the product of their individual probabilities: P(A and B) = P(A) * P(B).

- If A and B are independent, then knowing whether A occurs or not does not change the likelihood of B occurring.
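
The product rule gives a direct test for independence. The sketch below checks it by counting worlds for two fair dice; the helper and event names are hypothetical.

```python
from fractions import Fraction
from itertools import product

WORLDS = list(product(range(1, 7), repeat=2))  # 36 equally likely worlds


def probability(event):
    return Fraction(sum(1 for world in WORLDS if event(world)), len(WORLDS))


def is_independent(a, b):
    """True when P(a and b) equals P(a) * P(b)."""
    both = probability(lambda world: a(world) and b(world))
    return both == probability(a) * probability(b)


def first_is_6(world):
    return world[0] == 6


def second_is_3(world):
    return world[1] == 3


def sum_is_12(world):
    return world[0] + world[1] == 12


print(is_independent(first_is_6, second_is_3))  # True
print(is_independent(first_is_6, sum_is_12))    # False
```

The two dice do not influence each other, but the first die and the sum clearly do, so the product rule fails for the second pair.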

Implications of Independence

- If two events are independent, their probabilities can be multiplied together to calculate joint probabilities.

- However, if two events are not independent, their probabilities may be influenced by each other.

- Understanding independence is important for accurately modeling and calculating probabilities in various scenarios.

Independence of Events

This section discusses the concept of independence between events A and B.

Understanding Independence

- Events A and B are considered independent if the occurrence or non-occurrence of one event does not affect the probability of the other event.

- Independence can be determined by examining conditional probabilities.

Conditional Probability and Bayes' Rule

This section explains conditional probability and introduces Bayes' rule as a tool for calculating reverse conditional probabilities.

Conditional Probability Example

- Given data on rainy afternoons and cloudy mornings, we want to calculate the probability of afternoon rain given morning clouds.

- We know that 80% of rainy afternoons start with cloudy mornings, 40% of days have cloudy mornings, and 10% of days have rainy afternoons.

- Using Bayes' rule, we can express this as P(rain|clouds) = P(clouds|rain) * P(rain) / P(clouds).

- Substituting the values into the equation, we find that the probability is 0.2.
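
The substitution can be checked with a few lines of Python, using the three figures given above. The function name `bayes` and its parameter names are chosen for this sketch.

```python
def bayes(p_b_given_a, p_a, p_b):
    """Bayes' rule: P(a | b) = P(b | a) * P(a) / P(b)."""
    return p_b_given_a * p_a / p_b


# P(clouds | rain) = 0.8, P(rain) = 0.1, P(clouds) = 0.4
p_rain_given_clouds = bayes(p_b_given_a=0.8, p_a=0.1, p_b=0.4)
print(round(p_rain_given_clouds, 3))  # 0.2
```

Rounding is only for display: the floating-point result can differ from 0.2 in the last digits.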

Application of Bayes' Rule

This section explores how Bayes' rule can be applied in various scenarios to calculate unknown probabilities based on known conditional probabilities.

Medical Test Example

- In medicine, we may know the probability of a medical test result given a disease.

- By using this information, we can calculate the likelihood that someone has the disease given their test result.

Counterfeit Bill Example

- If we know that a certain percentage of counterfeit bills have blurry text around the edges, we can calculate the probability that a bill is counterfeit given blurry text.

Joint Probability

This section introduces joint probability, which considers the likelihood of multiple events occurring simultaneously.

Understanding Joint Probability

- Joint probability involves considering the probability distribution of multiple random variables.

- By having access to the joint probabilities of different combinations of events, we can gain more information about how these variables relate to each other.

Example with Cloudy Mornings and Afternoon Rain

- Given separate probability distributions for cloudy mornings and afternoon rain, we can calculate the joint probabilities for each combination of values.

- This joint probability distribution provides information on the likelihood of specific combinations, such as both cloudy and rainy conditions occurring.

Marginalization

In this section, the speaker discusses the concept of marginalization and how it can be used to calculate probabilities. They explain that marginalization lets us compute the probability of one variable from its joint distribution with another variable, even when we know nothing about the value of that second variable.

Marginalization Rule

- The marginalization rule helps calculate the probability of a variable (e.g., A) using another variable (e.g., B) by considering two cases: A happens and B happens, or A happens and B doesn't happen.

- These cases are disjoint, meaning they cannot both occur together.

- The probability of A is calculated by adding up the probabilities of these two cases: P(A and B) + P(A and not B).

- This rule applies regardless of the relationship between variables A and B.

Marginalization with Random Variables

- When dealing with random variables, the marginalization rule involves summing up over all possible values that one variable can take on while considering the joint distribution with another variable.

- The rule states that the probability of random variable X being equal to a specific value x_i is obtained by summing the joint probabilities P(X = x_i, Y = y_j) over all possible values y_j that random variable Y can take on.

- By adding up these individual joint probabilities, we can determine the unconditional probability of X taking on a particular value.
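
Written as a formula, with x_i a value of X and y_j ranging over every value of Y, the rule reads:

```latex
P(X = x_i) = \sum_{j} P(X = x_i,\, Y = y_j)
```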

Applying Marginalization Rule

- To apply marginalization, we consider a joint distribution table for variables such as cloudy/not cloudy and rainy/not rainy.

- If we want to find the probability that it is cloudy, we use marginalization by considering whether it is raining or not raining.

- We sum up the probabilities for each case: P(cloudy and raining) + P(cloudy and not raining).

- By accessing the values in the joint probability table, we can calculate the unconditional probability of it being cloudy.
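
A short sketch of this lookup-and-sum step follows. The joint table is an assumption: its entries were chosen to agree with the figures used in the Bayes' rule example in these notes (40% cloudy mornings, 10% rainy afternoons, 80% of rainy afternoons preceded by clouds). The function name `marginal` is hypothetical.

```python
# Assumed joint distribution over (clouds, rain); illustrative values.
JOINT = {
    ("cloudy", "rain"): 0.08,
    ("cloudy", "no rain"): 0.32,
    ("not cloudy", "rain"): 0.02,
    ("not cloudy", "no rain"): 0.58,
}


def marginal(joint, index):
    """Sum out every variable except the one at position `index`."""
    totals = {}
    for assignment, p in joint.items():
        value = assignment[index]
        totals[value] = totals.get(value, 0.0) + p
    return totals


clouds = marginal(JOINT, 0)  # P(cloudy) = 0.08 + 0.32
rain = marginal(JOINT, 1)    # P(rain)   = 0.08 + 0.02
print(clouds)
print(rain)
```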

Marginalization for Conditional Probabilities

- The marginalization rule can also be applied when we have conditional probabilities instead of joint probabilities.

- If we want to find the probability of event A, we consider two cases: B happens and A happens, or B doesn't happen and A happens.

- We use conditional probabilities to calculate these cases: P(A) = P(A|B) * P(B) + P(A|not B) * P(not B).

Conditioning

In this section, the speaker introduces the concept of conditioning and how it relates to conditional probabilities. They explain how conditioning allows us to calculate the probability of one event happening based on our knowledge of another event.

Conditioning Rule

- The conditioning rule is similar to the marginalization rule but uses conditional probabilities instead of joint probabilities.

- If we want to find the probability of event A, we consider two cases: B happens and A happens, or B doesn't happen and A happens.

- We use conditional probabilities to calculate these cases: P(A) = P(A|B) * P(B) + P(A|not B) * P(not B).
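
The conditioning rule can be sketched as a two-term weighted sum. Here P(clouds | rain) = 0.8 and P(rain) = 0.1 come from the Bayes' rule example in these notes, while P(clouds | no rain) = 0.32 / 0.9 is an assumed value, picked so that the result reproduces the 40% figure for cloudy mornings. The function name `condition` is hypothetical.

```python
def condition(p_a_given_b, p_a_given_not_b, p_b):
    """P(a) = P(a | b) * P(b) + P(a | not b) * P(not b)."""
    return p_a_given_b * p_b + p_a_given_not_b * (1 - p_b)


p_clouds = condition(
    p_a_given_b=0.8,             # P(clouds | rain), from the notes
    p_a_given_not_b=0.32 / 0.9,  # P(clouds | no rain), assumed for illustration
    p_b=0.1,                     # P(rain), from the notes
)
print(round(p_clouds, 3))  # 0.4
```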

Conclusion

The transcript discusses two important rules in probabilistic reasoning - marginalization and conditioning. Marginalization allows us to determine the probability of one variable based on another variable by considering different cases. Conditioning helps calculate the probability of an event happening based on our knowledge of another event using conditional probabilities. These rules are valuable tools in probabilistic calculations and provide insights into understanding joint distributions and conditional probabilities.

Probability Distributions in a Bayesian Network

In this section, the speaker discusses the probability distribution and conditional probability distribution in a Bayesian network.

Probability Distribution of Rain Random Variable

- Rain is a root node with no parents, so its probability distribution is unconditional: it simply assigns a probability to each possible value.

- The speaker provides an example of a probability distribution for the Rain random variable, with values such as "no rain," "light rain," and "heavy rain."

Conditional Probability Distribution of Maintenance Node

- The Maintenance node represents track maintenance and its likelihood depends on the intensity of rain.

- A conditional probability distribution is used to represent the relationship between the Rain random variable and the Maintenance node.

- The speaker explains that heavier rain makes it less likely for there to be track maintenance.

Conditional Probability Distribution of Train Node

- The Train node represents whether the train is on time or delayed.

- Its value depends on both the Maintenance node and the Rain random variable.

- A larger conditional probability distribution is constructed, considering both variables' values to determine whether the train is on time or delayed.

Influence on Appointment Node

- The Appointment node represents whether one attends or misses an appointment.

- It indirectly depends on track maintenance because if there is maintenance, it increases the likelihood of train delays, which may result in missing appointments.

- However, only direct relationships are encoded in this Bayesian network.

Conditional Probability Distributions, Continued

In this section, further details about conditional probability distributions in a Bayesian network are discussed.

Conditional Probability Distribution of Appointment Node

- A conditional probability distribution for the Appointment node is presented, conditioned on whether the train is on time or delayed.

- If the train is on time, there's a higher likelihood of attending a meeting. If delayed, there's a higher likelihood of missing it.

Summary of Bayesian Network Structure

- The Bayesian network consists of multiple nodes representing random variables.

- Each node is associated with a probability distribution, either unconditional or conditional.

- Arrows between nodes indicate dependencies and allow for calculating probabilities based on parent values.

Computation and Calculation

- With the information provided in the Bayesian network structure, computations and calculations can be performed to determine probabilities and make predictions.
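
One such computation is the joint probability of a complete assignment, obtained by multiplying each node's probability given the values of its parents. The sketch below follows the network structure described in these notes, but every number in the tables is an assumption chosen for illustration, as is the function name `joint_probability`.

```python
from itertools import product

# Illustrative tables (assumed values, not taken from the notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}
P_MAINTENANCE = {  # P(maintenance | rain)
    "none": {"yes": 0.4, "no": 0.6},
    "light": {"yes": 0.2, "no": 0.8},
    "heavy": {"yes": 0.1, "no": 0.9},
}
P_TRAIN = {  # P(train | rain, maintenance)
    ("none", "yes"): {"on time": 0.8, "delayed": 0.2},
    ("none", "no"): {"on time": 0.9, "delayed": 0.1},
    ("light", "yes"): {"on time": 0.6, "delayed": 0.4},
    ("light", "no"): {"on time": 0.7, "delayed": 0.3},
    ("heavy", "yes"): {"on time": 0.4, "delayed": 0.6},
    ("heavy", "no"): {"on time": 0.5, "delayed": 0.5},
}
P_APPOINTMENT = {  # P(appointment | train)
    "on time": {"attend": 0.9, "miss": 0.1},
    "delayed": {"attend": 0.6, "miss": 0.4},
}


def joint_probability(rain, maintenance, train, appointment):
    """Multiply each node's probability given its parents' values."""
    return (
        P_RAIN[rain]
        * P_MAINTENANCE[rain][maintenance]
        * P_TRAIN[(rain, maintenance)][train]
        * P_APPOINTMENT[train][appointment]
    )


p = joint_probability("light", "no", "delayed", "miss")
print(round(p, 4))  # 0.2 * 0.8 * 0.3 * 0.4 = 0.0192

# Sanity check: the joint probabilities of all assignments sum to 1.
total = sum(
    joint_probability(r, m, t, a)
    for r, m, t, a in product(
        P_RAIN, ("yes", "no"), ("on time", "delayed"), ("attend", "miss")
    )
)
print(round(total, 6))  # 1.0
```

Appointment looks only at Train, which mirrors the point that only direct relationships are encoded in the network.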

Constructing and Interpreting a Bayesian Network

This section focuses on the construction and interpretation of a Bayesian network.

Construction of Bayesian Network

- The structure of a Bayesian network is described, including nodes representing random variables and their relationships.

- Probability distributions are associated with each node, allowing for probabilistic reasoning.

Relationships Between Nodes

- Arrows between nodes represent dependencies, indicating how one variable influences another.

- Conditional probability distributions capture these relationships by considering parent values.

Importance of Node Ordering

- Intelligently ordering the nodes in a Bayesian network is crucial to accurately represent dependencies and influence among variables.

- Determining which factors influence other nodes helps construct an effective Bayesian network.

Influence on Appointment Node Continued

- The Appointment node's value depends on direct influences such as the Train node's status (on time or delayed).

- Knowing whether there's track maintenance doesn't provide additional information beyond what's already known from the Train node.

Bayesian Networks: Summary

This section concludes the discussion on constructing a Bayesian network and its implications.

Representation of Nodes in Bayesian Network

- Each node in the Bayesian network is associated with a probability distribution that reflects its relationship with other nodes.

- Unconditional probability distributions are used for root nodes like Rain, while conditional probability distributions consider parent values.

Relationship Between Variables

- The arrows between nodes indicate dependence, allowing for calculation of probabilities based on parent values.

Conclusion: Probabilistic Reasoning in Bayesian Networks

- Bayesian networks provide a framework for probabilistic reasoning and modeling dependencies between random variables.

- By constructing the network structure and assigning appropriate probability distributions, computations can be performed to make predictions and infer probabilities.

Extracting Probability of Light Rain

In this section, the speaker discusses extracting the probability of light rain from a conditional probability table.

- The variable for track maintenance is dependent on the rain variable, creating a conditional distribution.

- The speaker encodes in Python the same information that the graph represents, using conditional probability distributions.

- Different distributions are defined for no rain, light rain, and heavy rain.

- The Bayesian network model is constructed by defining states (rain, maintenance, train, appointment) and adding edges between related nodes.

- The Pomegranate library is used as an example of a library for implementing Bayesian networks.
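
As a rough illustration of what such a conditional table holds, here is a plain-Python sketch that does not use Pomegranate. The numeric values are assumptions made for this example, and the variable names are hypothetical.

```python
# Assumed, illustrative values (not listed in these notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}  # unconditional
P_MAINT = {  # P(maintenance | rain): one row per value of the parent
    "none": {"yes": 0.4, "no": 0.6},
    "light": {"yes": 0.2, "no": 0.8},
    "heavy": {"yes": 0.1, "no": 0.9},
}

# Extract a single entry: P(maintenance = yes | rain = light).
p_yes_given_light = P_MAINT["light"]["yes"]

# Marginalize over the parent to get P(maintenance = yes).
p_yes = sum(P_RAIN[rain] * P_MAINT[rain]["yes"] for rain in P_RAIN)

print(p_yes_given_light, round(p_yes, 4))
```

Each row of the conditional table is a full distribution over the child's values, so every row sums to 1.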

Conditional Distribution in Bayesian Network

This section explains how conditional distributions are used in a Bayesian network to represent probabilities based on different conditions.

- Conditional probability tables are used to define probabilities based on specific conditions within a Bayesian network.

- The speaker demonstrates an example with the train node that depends on both rain and track maintenance variables.

- Probabilities are assigned to different combinations of parent values for the train node.

- Similar distributions can be defined for other random variables in the network.
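
A table for a node with two parents can be sketched as a dictionary keyed by the pair of parent values. This is a plain-Python illustration rather than any library's API; the numbers are assumed, and `check_cpt` is a hypothetical helper.

```python
import math

# Assumed, illustrative values: P(train | rain, maintenance).
P_TRAIN = {
    ("none", "yes"): {"on time": 0.8, "delayed": 0.2},
    ("none", "no"): {"on time": 0.9, "delayed": 0.1},
    ("light", "yes"): {"on time": 0.6, "delayed": 0.4},
    ("light", "no"): {"on time": 0.7, "delayed": 0.3},
    ("heavy", "yes"): {"on time": 0.4, "delayed": 0.6},
    ("heavy", "no"): {"on time": 0.5, "delayed": 0.5},
}


def check_cpt(cpt):
    """Raise ValueError if any row is not a valid probability distribution."""
    for parents, row in cpt.items():
        if any(p < 0 for p in row.values()):
            raise ValueError(f"negative probability in row {parents}")
        if not math.isclose(sum(row.values()), 1.0):
            raise ValueError(f"row {parents} does not sum to 1")


check_cpt(P_TRAIN)
# Look up P(train = delayed | rain = heavy, maintenance = yes).
print(P_TRAIN[("heavy", "yes")]["delayed"])
```

There is one row per combination of parent values, so three rain values and two maintenance values give six rows.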

Encoding Information in Python Program

This section focuses on encoding conditional probability distributions in a Python program using libraries like Pomegranate.

- The speaker uses Python to encode conditional probability distributions into a Bayesian network model.

- The syntax varies by library, but the key idea is the same: the library lets you describe a general Bayesian network in which each node's distribution is conditioned on the values of its parents.

Defining Probability Distributions for Nodes

This section explains how to define probability distributions for nodes based on the values of their parents in a Bayesian network.

- The speaker demonstrates how to define probability distributions for the train node based on different combinations of parent values (rain and maintenance).

- Similar distributions can be defined for other nodes in the network.

- The goal is to specify the distribution that each node should follow based on its parents' values.

Constructing the Bayesian Network

This section explains how to construct a Bayesian network by defining states and adding edges between dependent nodes.

- The speaker constructs a Bayesian network by describing the states of the network (rain, maintenance, train, appointment) and adding edges between related nodes.

- Rain influences track maintenance, both rain and maintenance influence the train, and the train influences whether one makes it to an appointment.

- The last step finalizes the model so that it can be used for computation.
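
The structure described above can be sketched without any library as a mapping from each node to its parents. This is only an illustration: `PARENTS`, `edges`, and `topological_order` are hypothetical names, and the edge from rain to train follows the earlier statement that the train node depends on both rain and track maintenance.

```python
# Each node maps to the list of nodes it depends on.
PARENTS = {
    "rain": [],
    "maintenance": ["rain"],
    "train": ["rain", "maintenance"],
    "appointment": ["train"],
}


def edges(parents):
    """List the directed edges (parent, child) of the network."""
    return [(p, child) for child, ps in parents.items() for p in ps]


def topological_order(parents):
    """Order nodes so that every node appears after all of its parents."""
    order, placed = [], set()
    while len(order) < len(parents):
        progressed = False
        for node, ps in parents.items():
            if node not in placed and all(p in placed for p in ps):
                order.append(node)
                placed.add(node)
                progressed = True
        if not progressed:
            raise ValueError("the graph contains a cycle")
    return order


print(edges(PARENTS))
print(topological_order(PARENTS))
```

An ordering in which parents come before children is what allows values to be sampled or probabilities to be multiplied one node at a time.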

Libraries for Bayesian Networks

This section discusses libraries like Pomegranate that can be used for implementing Bayesian networks.

- Pomegranate is one example of several libraries available for implementing Bayesian networks.

- These libraries provide tools and functions to design and work with general-purpose Bayesian networks.

- With these libraries, programmers can define nodes and probability distributions and then run computations on the resulting network.

Calculating Joint Probability Using Python Library

This section demonstrates how to calculate joint probabilities using a Python library with a given observation.

- The speaker uses the same Python library to calculate the joint probability of a given observation.

- An example calculation is shown in which the probability of no rain, no track maintenance, an on-time train, and attending the meeting is calculated.

Probability Calculation Example

This section provides an example of calculating the probability of a specific scenario using the Python library.

- The speaker calculates the probability of a specific scenario: no rain, no track maintenance, on-time train arrival, and attending a meeting.

- The probability is approximately 0.34, indicating that this scenario occurs about one-third of the time.

Probability Calculation for Missing Appointment

This section demonstrates calculating the probability of missing an appointment despite favorable conditions.

- The speaker calculates the probability of missing an appointment despite having no rain, no track maintenance, and an on-time train arrival.

- The probability is approximately 0.04, indicating that this scenario occurs about 4% of the time.
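
The two figures can be reproduced by multiplying one entry from each node's table. The sketch below is plain Python rather than the library's API; the table values are not listed in these notes and are assumed here, chosen so that the products agree with the approximate 0.34 and 0.04 quoted above.

```python
# Assumed, illustrative probability tables (not listed in these notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}
P_MAINT = {  # P(maintenance | rain)
    "none": {"yes": 0.4, "no": 0.6},
    "light": {"yes": 0.2, "no": 0.8},
    "heavy": {"yes": 0.1, "no": 0.9},
}
P_TRAIN = {  # P(train | rain, maintenance)
    ("none", "yes"): {"on time": 0.8, "delayed": 0.2},
    ("none", "no"): {"on time": 0.9, "delayed": 0.1},
    ("light", "yes"): {"on time": 0.6, "delayed": 0.4},
    ("light", "no"): {"on time": 0.7, "delayed": 0.3},
    ("heavy", "yes"): {"on time": 0.4, "delayed": 0.6},
    ("heavy", "no"): {"on time": 0.5, "delayed": 0.5},
}
P_APPT = {  # P(appointment | train)
    "on time": {"attend": 0.9, "miss": 0.1},
    "delayed": {"attend": 0.6, "miss": 0.4},
}


def joint(rain, maint, train, appt):
    """P(rain, maintenance, train, appointment) as a product of table entries."""
    return (P_RAIN[rain] * P_MAINT[rain][maint]
            * P_TRAIN[(rain, maint)][train] * P_APPT[train][appt])


print(round(joint("none", "no", "on time", "attend"), 4))  # 0.3402
print(round(joint("none", "no", "on time", "miss"), 4))    # 0.0378
```

The only difference between the two calls is the last factor, 0.9 for attending versus 0.1 for missing, which is why the second result is one ninth of the first.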

Joint Probability Calculation in Bayesian Networks

This section explains how joint probabilities are calculated in Bayesian networks by considering multiple variables and their dependencies.

- Joint probabilities in Bayesian networks involve considering multiple variables and their dependencies.

- The joint probability of a complete assignment is the product of each node's probability given its parents' values, so it can be computed step by step from the conditional probabilities encoded in the network.

Inference Problems in Bayesian Networks

This section discusses solving inference problems in Bayesian networks to infer the likelihood of other variables based on observed evidence.

- Solving inference problems involves inferring the likelihood of other variables based on observed evidence within a Bayesian network.

- A Python program is used to pass observed evidence (e.g., delayed train) into the model and make predictions about other variables' values.

Making Predictions Using Evidence

This section demonstrates making predictions about other variables' values based on observed evidence using a Python program.

- The speaker uses a Python program to make predictions about other variables' values based on observed evidence (e.g., delayed train).

- The computational logic is performed in just a few lines of code, where the model and evidence are passed into a prediction function.

- The resulting distribution for each variable is then printed.
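
What such a prediction function computes can be sketched by brute-force enumeration: sum the joint probability over every assignment consistent with the evidence, then normalize. This is a plain-Python illustration and not the library's implementation; the tables are assumed values and `posterior` is a hypothetical helper.

```python
from itertools import product

# Assumed, illustrative probability tables (not listed in these notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}
P_MAINT = {
    "none": {"yes": 0.4, "no": 0.6},
    "light": {"yes": 0.2, "no": 0.8},
    "heavy": {"yes": 0.1, "no": 0.9},
}
P_TRAIN = {
    ("none", "yes"): {"on time": 0.8, "delayed": 0.2},
    ("none", "no"): {"on time": 0.9, "delayed": 0.1},
    ("light", "yes"): {"on time": 0.6, "delayed": 0.4},
    ("light", "no"): {"on time": 0.7, "delayed": 0.3},
    ("heavy", "yes"): {"on time": 0.4, "delayed": 0.6},
    ("heavy", "no"): {"on time": 0.5, "delayed": 0.5},
}
P_APPT = {
    "on time": {"attend": 0.9, "miss": 0.1},
    "delayed": {"attend": 0.6, "miss": 0.4},
}
DOMAINS = {
    "rain": ["none", "light", "heavy"],
    "maintenance": ["yes", "no"],
    "train": ["on time", "delayed"],
    "appointment": ["attend", "miss"],
}


def joint(a):
    """Joint probability of a complete assignment given as a dictionary."""
    return (P_RAIN[a["rain"]] * P_MAINT[a["rain"]][a["maintenance"]]
            * P_TRAIN[(a["rain"], a["maintenance"])][a["train"]]
            * P_APPT[a["train"]][a["appointment"]])


def posterior(evidence):
    """Distribution of every variable given the evidence, by enumeration."""
    names = list(DOMAINS)
    totals = {var: {v: 0.0 for v in vals} for var, vals in DOMAINS.items()}
    for combo in product(*DOMAINS.values()):
        assignment = dict(zip(names, combo))
        if any(assignment[k] != v for k, v in evidence.items()):
            continue  # inconsistent with the evidence
        p = joint(assignment)
        for var, value in assignment.items():
            totals[var][value] += p
    normalizer = sum(totals[names[0]].values())  # equals P(evidence)
    return {var: {v: p / normalizer for v, p in dist.items()}
            for var, dist in totals.items()}


result = posterior({"train": "delayed"})
for var, dist in result.items():
    print(var, {v: round(p, 4) for v, p in dist.items()})
```

Enumeration is exact but visits every combination of values, which is why approximate methods such as sampling become attractive for larger networks.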

Probability Sampling and Weighting

In this section, the speaker discusses probability sampling and weighting in the context of Bayesian networks.

- The speaker explains that when sampling non-evidence variables in a Bayesian network, each sample should be weighted by its likelihood based on the probability of all the evidence.

- Previously, all samples were weighted equally, but now each sample is multiplied by its likelihood to obtain a more accurate distribution.

- The process involves fixing the values of evidence variables and sampling the other non-evidence variables.

- The weight of each sample is determined by how likely it is for the evidence to occur given the values of other variables.

- By weighting samples according to their likelihood, a more accurate inference can be made.

Fixing Evidence Variables in Sampling

This section focuses on fixing evidence variables during the sampling procedure in Bayesian networks.

- Instead of sampling everything, evidence variables are fixed with their known values.

- Non-evidence variables are then sampled using the Bayesian network's probability distributions.

- Because the evidence variables always take their observed values, no sample has to be discarded for contradicting the evidence, which makes the procedure more efficient.

Likelihood Weighting

Likelihood weighting is introduced as a method to weight samples based on their likelihood in Bayesian networks.

- After sampling non-evidence variables, each sample is weighted by its likelihood.

- Likelihood is defined as the probability of all evidence given a particular sample.

- Samples with more likely evidence have higher weights assigned to them.

- This weighting process improves accuracy compared to treating all samples equally.

Weighting Samples by Likelihood

The speaker explains how samples are weighted based on the likelihood of evidence in Bayesian networks.

- Previously, all samples were given equal weight when calculating the overall average.

- Now, each sample is multiplied by its likelihood to obtain a more accurate distribution.

- The weight of a sample is determined by the probability of evidence occurring given the values of other variables.

- By assigning weights based on evidence likelihood, a more precise inference can be made.

Sampling Procedure with Fixed Evidence Variables

This section describes the sampling procedure with fixed evidence variables in Bayesian networks.

- When performing the sampling procedure, evidence variables are fixed with their known values.

- Only non-evidence variables are sampled to generate a sample.

- The example given involves fixing the train being on time as evidence and sampling other variables like rain and track maintenance.

- Once a sample is generated, it needs to be weighted based on the likelihood of the evidence observed.

Generating and Weighting Samples

This section explains how samples are generated and weighted in Bayesian networks.

- To generate a sample, fix evidence variables and sample non-evidence variables.

- After generating a sample, it needs to be weighted based on the likelihood of observed evidence.

- The weight assigned to each sample depends on how probable it is for the evidence to occur given other variable values.
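
The procedure can be sketched in plain Python for the case where the train's status is the evidence. This is a minimal illustration under stated assumptions, not the lecture's code: the probability tables are assumed values, and `draw`, `weighted_sample`, and `estimate_rain` are hypothetical names.

```python
import random

# Assumed, illustrative probability tables (not listed in these notes).
P_RAIN = {"none": 0.7, "light": 0.2, "heavy": 0.1}
P_MAINT = {
    "none": {"yes": 0.4, "no": 0.6},
    "light": {"yes": 0.2, "no": 0.8},
    "heavy": {"yes": 0.1, "no": 0.9},
}
P_TRAIN = {
    ("none", "yes"): {"on time": 0.8, "delayed": 0.2},
    ("none", "no"): {"on time": 0.9, "delayed": 0.1},
    ("light", "yes"): {"on time": 0.6, "delayed": 0.4},
    ("light", "no"): {"on time": 0.7, "delayed": 0.3},
    ("heavy", "yes"): {"on time": 0.4, "delayed": 0.6},
    ("heavy", "no"): {"on time": 0.5, "delayed": 0.5},
}
P_APPT = {
    "on time": {"attend": 0.9, "miss": 0.1},
    "delayed": {"attend": 0.6, "miss": 0.4},
}


def draw(dist, rng):
    """Sample one value from a {value: probability} dictionary."""
    values = list(dist)
    return rng.choices(values, weights=[dist[v] for v in values])[0]


def weighted_sample(train_evidence, rng):
    """Sample the non-evidence variables and weight by the evidence likelihood."""
    rain = draw(P_RAIN, rng)
    maintenance = draw(P_MAINT[rain], rng)
    # The train is evidence: fix it instead of sampling it, and record how
    # likely that fixed value is given the sampled parents.
    weight = P_TRAIN[(rain, maintenance)][train_evidence]
    appointment = draw(P_APPT[train_evidence], rng)
    sample = {"rain": rain, "maintenance": maintenance,
              "train": train_evidence, "appointment": appointment}
    return sample, weight


def estimate_rain(train_evidence, n_samples, seed=0):
    """Estimate P(rain | train = train_evidence) from weighted samples."""
    rng = random.Random(seed)
    totals = {value: 0.0 for value in P_RAIN}
    for _ in range(n_samples):
        sample, weight = weighted_sample(train_evidence, rng)
        totals[sample["rain"]] += weight  # add the weight, not a count of 1
    normalizer = sum(totals.values())
    return {value: total / normalizer for value, total in totals.items()}


print(estimate_rain("on time", 10_000))
```

Every generated sample contributes to the estimate, so none of the sampling effort is wasted; samples in which the evidence was unlikely simply count for less.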

Sampling Methods for Inference

Different sampling methods for approximating inference in Bayesian networks are discussed.

- Various sampling methods exist to approximate inference in Bayesian networks.

- These methods estimate the probability distribution of a variable from samples rather than computing it exactly.

- Likelihood weighting is one such method, but there are others as well.

Dealing with Values Over Time

This section introduces the concept of dealing with values that change over time in Bayesian networks.

- In some cases, it is necessary to consider how values of variables change over time in Bayesian networks.

- The speaker mentions weather as an example where values may vary over different time steps.

- To address this, a random variable can be defined for each possible time step, allowing for analysis of uncertainty over time.

Simplifying Assumptions and Markov Assumption

Simplifying assumptions and the Markov assumption are discussed as helpful tools in analyzing Bayesian networks.

- When dealing with complex problems like analyzing weather data over long periods, simplifying assumptions are often necessary.

- The Markov assumption states that the current state depends only on a finite fixed number of previous states.

- Instead of considering all past weather data, predictions can be made based on a limited number of previous days' weather.
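
Under the simplest version of this assumption, where each day's weather depends only on the previous day's, a sequence can be generated from a starting distribution and a transition table. The sketch below is plain Python; the probabilities are assumed values for illustration, and `sample_chain` is a hypothetical helper.

```python
import random

# Assumed, illustrative values.
START = {"sun": 0.5, "rain": 0.5}
TRANSITIONS = {  # P(tomorrow | today)
    "sun": {"sun": 0.8, "rain": 0.2},
    "rain": {"sun": 0.3, "rain": 0.7},
}


def draw(dist, rng):
    """Sample one value from a {value: probability} dictionary."""
    values = list(dist)
    return rng.choices(values, weights=[dist[v] for v in values])[0]


def sample_chain(length, seed=None):
    """Sample a weather sequence; each day depends only on the previous day."""
    if length <= 0:
        return []
    rng = random.Random(seed)
    states = [draw(START, rng)]
    while len(states) < length:
        states.append(draw(TRANSITIONS[states[-1]], rng))
    return states


print(sample_chain(10, seed=0))
```

Only the most recent state is consulted at each step, so the table stays the same size no matter how long the sequence grows.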

Introduction to Hidden Markov Models

In this section, the speaker introduces the concept of Hidden Markov Models (HMM) and explains how they can be used to represent real-world scenarios involving hidden states and observed emissions.

Understanding the Markov Assumption

- A Hidden Markov Model represents scenarios in which the Markov assumption holds but the underlying state is not directly observed.

- In its simplest form, the Markov assumption says that the current state depends only on the previous state.

- In HMM, there are underlying states that represent the true nature of the world, and emissions or observations that are produced based on these states.

Tasks in Hidden Markov Models

- Various tasks can be performed using HMM based on conditional probabilities and probability distributions.

- Filtering: Calculate the distribution for the current state given observations from the start until now.

- Prediction: Calculate the distribution for a future state given observations from the start until now.

- Smoothing: Calculate distributions for past states given observations from start until now.

- Most likely explanation: Determine the most likely sequence of states based on observations.
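
As an illustration of the filtering task, the sketch below applies the standard forward update: predict the next state from the current belief, then reweight by how well each state explains the new observation. It is plain Python with assumed probabilities; `filter_states` is a hypothetical name.

```python
# Assumed, illustrative values.
START = {"sun": 0.5, "rain": 0.5}
TRANSITIONS = {  # P(next state | current state)
    "sun": {"sun": 0.8, "rain": 0.2},
    "rain": {"sun": 0.3, "rain": 0.7},
}
EMISSIONS = {  # P(observation | state)
    "sun": {"umbrella": 0.2, "no umbrella": 0.8},
    "rain": {"umbrella": 0.9, "no umbrella": 0.1},
}


def normalize(dist):
    """Scale the values of a dictionary so that they sum to 1."""
    total = sum(dist.values())
    return {state: p / total for state, p in dist.items()}


def filter_states(observations):
    """Return the distribution of the current state given all observations."""
    belief = normalize(
        {s: START[s] * EMISSIONS[s][observations[0]] for s in START}
    )
    for obs in observations[1:]:
        # Predict: push the belief one step through the transition model.
        predicted = {
            s: sum(belief[prev] * TRANSITIONS[prev][s] for prev in belief)
            for s in START
        }
        # Update: reweight by the likelihood of the new observation.
        belief = normalize({s: predicted[s] * EMISSIONS[s][obs] for s in START})
    return belief


print(filter_states(["umbrella", "umbrella", "no umbrella"]))
```

Prediction uses the same "predict" step without a following update, and smoothing additionally folds in observations made after the time step of interest.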

Applications of Hidden Markov Models

This section explores some possible applications of Hidden Markov Models and their relevance in various tasks such as voice recognition.

Voice Recognition

- HMMs can be used in voice recognition to determine the most likely sequence of words or sounds a user spoke, given the audio waveform.

- By analyzing observed emissions, HMM can predict sequences of actual words or syllables spoken by a user.

Building a Hidden Markov Model

Here, we learn about building a Hidden Markov Model using Python. The speaker demonstrates how to define possible states, emission probabilities, transition matrix, starting probabilities, and create a model for inference.

Defining States and Emission Probabilities

- Define possible states (e.g., sunny and rainy) along with their emission probabilities (e.g., probability of seeing people with or without an umbrella).

- Emission probabilities represent the likelihood of observing a particular emission given a state.

Transition Matrix and Starting Probabilities

- Define a transition matrix to specify the likelihood of transitioning from one state to another.

- Assign starting probabilities to determine the initial likelihood of each state.

Creating the Model

- Use the defined information to create a Hidden Markov Model for inference.
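
The three ingredients can be bundled into a small container that checks they are consistent. This is a hedged plain-Python sketch, not the lecture's library code: the class `HiddenMarkovModel` and the probability values are assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class HiddenMarkovModel:
    """Hypothetical container for the parameters of a discrete HMM."""
    start: dict        # P(first state)
    transitions: dict  # P(next state | current state)
    emissions: dict    # P(observation | state)

    def __post_init__(self):
        states = set(self.start)
        if set(self.transitions) != states or set(self.emissions) != states:
            raise ValueError("every state needs a transition and emission row")
        rows = [self.start, *self.transitions.values(), *self.emissions.values()]
        for row in rows:
            if not math.isclose(sum(row.values()), 1.0):
                raise ValueError(f"probabilities do not sum to 1: {row}")

    @property
    def states(self):
        return list(self.start)


# Assumed, illustrative values.
model = HiddenMarkovModel(
    start={"sun": 0.5, "rain": 0.5},
    transitions={
        "sun": {"sun": 0.8, "rain": 0.2},
        "rain": {"sun": 0.3, "rain": 0.7},
    },
    emissions={
        "sun": {"umbrella": 0.2, "no umbrella": 0.8},
        "rain": {"umbrella": 0.9, "no umbrella": 0.1},
    },
)
print(model.states)
```

Validating the rows at construction time catches a mistyped probability before it silently distorts later inference.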

Predicting Hidden States

In this section, we explore how Hidden Markov Models can be used to predict hidden states based on observed emissions.

Prediction Process

- Provide a list of observations (e.g., umbrella, no umbrella) to the model.

- The model predicts the most likely explanation for these observations by determining the sequence of hidden states that best matches the observations.

Example Prediction

- Given a sequence of observations (umbrella, umbrella, no umbrella, etc.), predict the most likely sequence of hidden states (rain, rain, sun, etc.).

- The prediction is based on the underlying probabilities and transitions defined in the Hidden Markov Model.
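
One standard way to compute a most likely state sequence is the Viterbi algorithm, sketched below in plain Python. These notes do not say which algorithm the library uses, so this is an assumption for illustration; the probabilities are assumed values and `most_likely_states` is a hypothetical name.

```python
# Assumed, illustrative values.
START = {"sun": 0.5, "rain": 0.5}
TRANSITIONS = {  # P(next state | current state)
    "sun": {"sun": 0.8, "rain": 0.2},
    "rain": {"sun": 0.3, "rain": 0.7},
}
EMISSIONS = {  # P(observation | state)
    "sun": {"umbrella": 0.2, "no umbrella": 0.8},
    "rain": {"umbrella": 0.9, "no umbrella": 0.1},
}


def most_likely_states(observations):
    """Viterbi: the single most probable hidden-state sequence."""
    # best[s] is the probability of the best path so far that ends in state s.
    best = {s: START[s] * EMISSIONS[s][observations[0]] for s in START}
    backpointers = []
    for obs in observations[1:]:
        pointers, updated = {}, {}
        for s in START:
            # Choose the predecessor that maximizes the path probability.
            prev = max(best, key=lambda p: best[p] * TRANSITIONS[p][s])
            pointers[s] = prev
            updated[s] = best[prev] * TRANSITIONS[prev][s] * EMISSIONS[s][obs]
        backpointers.append(pointers)
        best = updated
    # Follow the back-pointers from the best final state to recover the path.
    state = max(best, key=best.get)
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    return path[::-1]


print(most_likely_states(["umbrella", "umbrella", "no umbrella"]))
# ['rain', 'rain', 'sun']
```

For long observation sequences the raw products become very small, so practical implementations usually add log probabilities instead of multiplying.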

Conclusion

Hidden Markov Models represent scenarios involving hidden states and observed emissions. They support tasks such as filtering, prediction, smoothing, and finding the most likely explanation. By understanding how to build and use Hidden Markov Models, we can make predictions about hidden states from observed data.

Formulating Ideas as Hidden Markov Models

In this section, the speaker discusses the concept of formulating ideas as Hidden Markov Models.

- The speaker suggests that many problems involving hidden states and observed emissions can be formulated as Hidden Markov Models.

- Using Hidden Markov Models allows for a structured representation of ideas.

- This approach can help in understanding and analyzing complex systems or processes.

- By formulating ideas as Hidden Markov Models, it becomes easier to identify patterns and make predictions based on observed data.
